https://rust-lang.github.io/rust-clippy/master/index.html#nonminimal_bool

ALL the Clippy Lints

熟悉rust的人都知道,rust社区提供了一个优秀的优化代码的编程工具,就是clippy。这个网站列举了所有的lints。

https://rust-lang.github.io/rust-clippy/master/index.html

在 Rust 中,可以使用 cargo 命令来执行静态检测,这被称为 cargo lint。它使用了一个名为 clippy 的官方代码检查工具。clippy 可以捕获潜在的错误、代码风格问题和性能反模式。

执行静态检测的命令如下:

1 | cargo clippy |

这个命令会对你的 Rust 代码进行静态分析,并输出潜在的问题和警告。

一些可选的命令行参数:

--all-targets: 检查所有目标(如库、测试等),而不仅仅是主要目标--all-features: 检查所有特性--tests: 检查测试代码--examples: 检查示例代码--benches: 检查基准测试代码-W <lint_name>: 允许控制哪些lint需要被启用或禁用

另外,你还可以将 clippy 集成到你的 CI/CD 流程中,以确保新的代码更改都能通过静态检测。

在项目的 Cargo.toml 文件中添加如下设置可以获得更严格的检查:

1 | [features] |

通过 cargo clippy 命令,你可以获得有用的建议来提高代码质量。这是 Rust 生态系统中推荐的最佳实践。

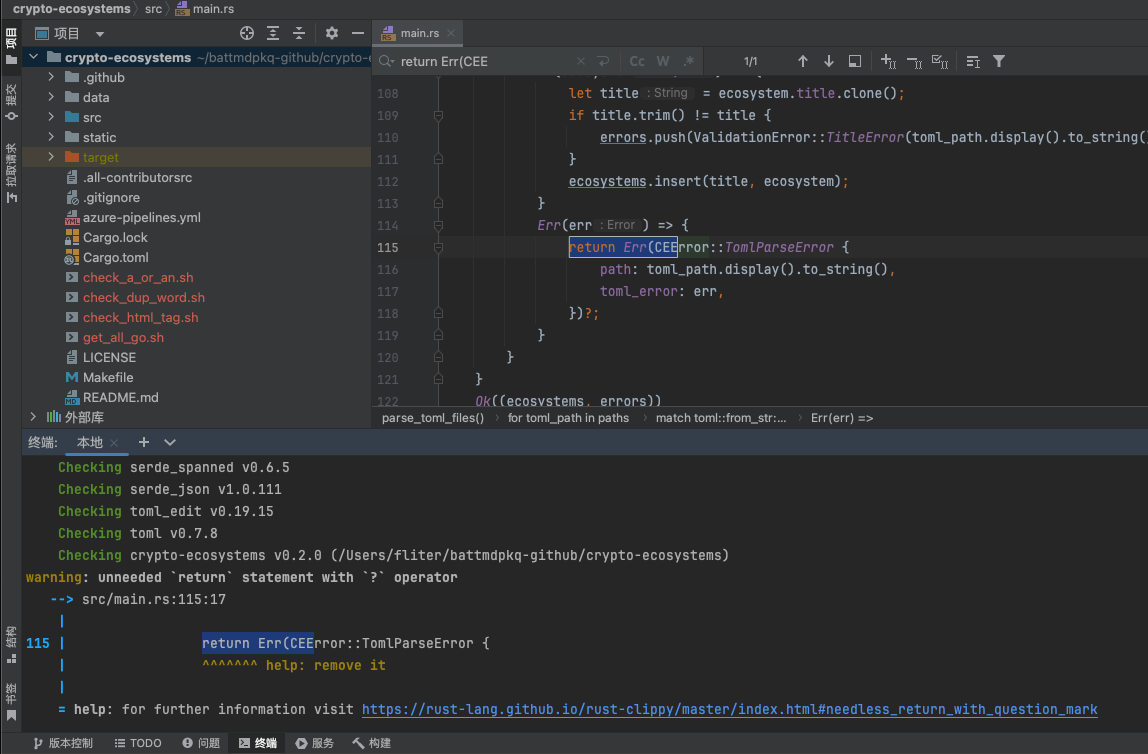

warning: unneeded return statement with ? operator

这个警告 warning: unneeded 'return' statement with '?' operator 是指在使用 ? 操作符的情况下,return 关键字是多余的。

在 Rust 中,? 操作符被用于传播错误。当它被应用于一个 Result 值时,如果该值是 Ok(x),它将返回 x;如果是 Err(err),它将从当前函数返回 Err(err)。因此,? 已经隐式地处理了返回操作。

例如,假设你有这样的代码:

1 | fn read_file() -> Result<String, io::Error> { |

这里的 return Ok(contents) 就是多余的,因为 ? 操作符已经隐含了对应的返回语义。你可以简化为:

1 | fn read_file() -> Result<String, io::Error> { |

这样的写法更加简洁,同时也避免了编译器的警告。

总的来说,解决这个警告的方法是:移除多余的 return 语句,让 ? 操作符显式地处理错误返回。这不仅使代码更加简洁,而且遵循了 Rust 的惯用写法。

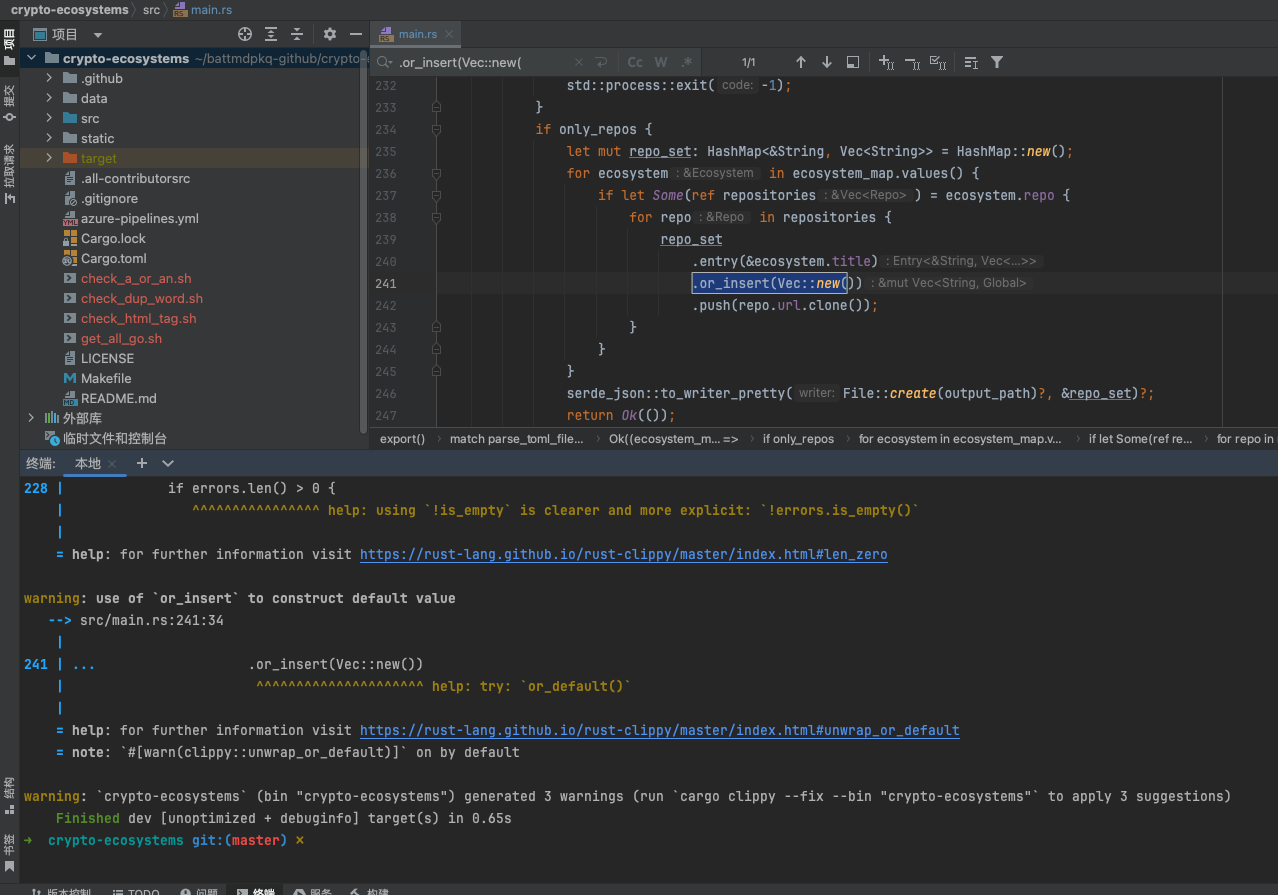

warning: use of or_insert to construct default value

这个警告是指在使用 HashMap 的 or_insert 方法时,构造默认值的方式可能不是最佳实践。

在 Rust 中,当你想要从一个 HashMap 中获取一个键的值时,如果该键不存在,可以使用 or_insert 方法来插入一个新的值。例如:

1 | let mut map = HashMap::new(); |

这段代码在 key 不存在时,会插入一个新的空 Vec。

但是,警告建议你使用 or_default() 方法来代替手动构造默认值。or_default() 方法会自动为你构造该类型的默认值。对于 Vec来说,默认值就是一个空 Vec。

所以,你可以这样修改代码:

1 | let mut map = HashMap::new(); |

这样不仅代码更简洁,而且如果将来 Vec 的默认值发生变化,你也不需要更新代码。

总的来说,使用 or_default() 方法可以让你的代码更加简洁、类型安全,并且更容易维护。

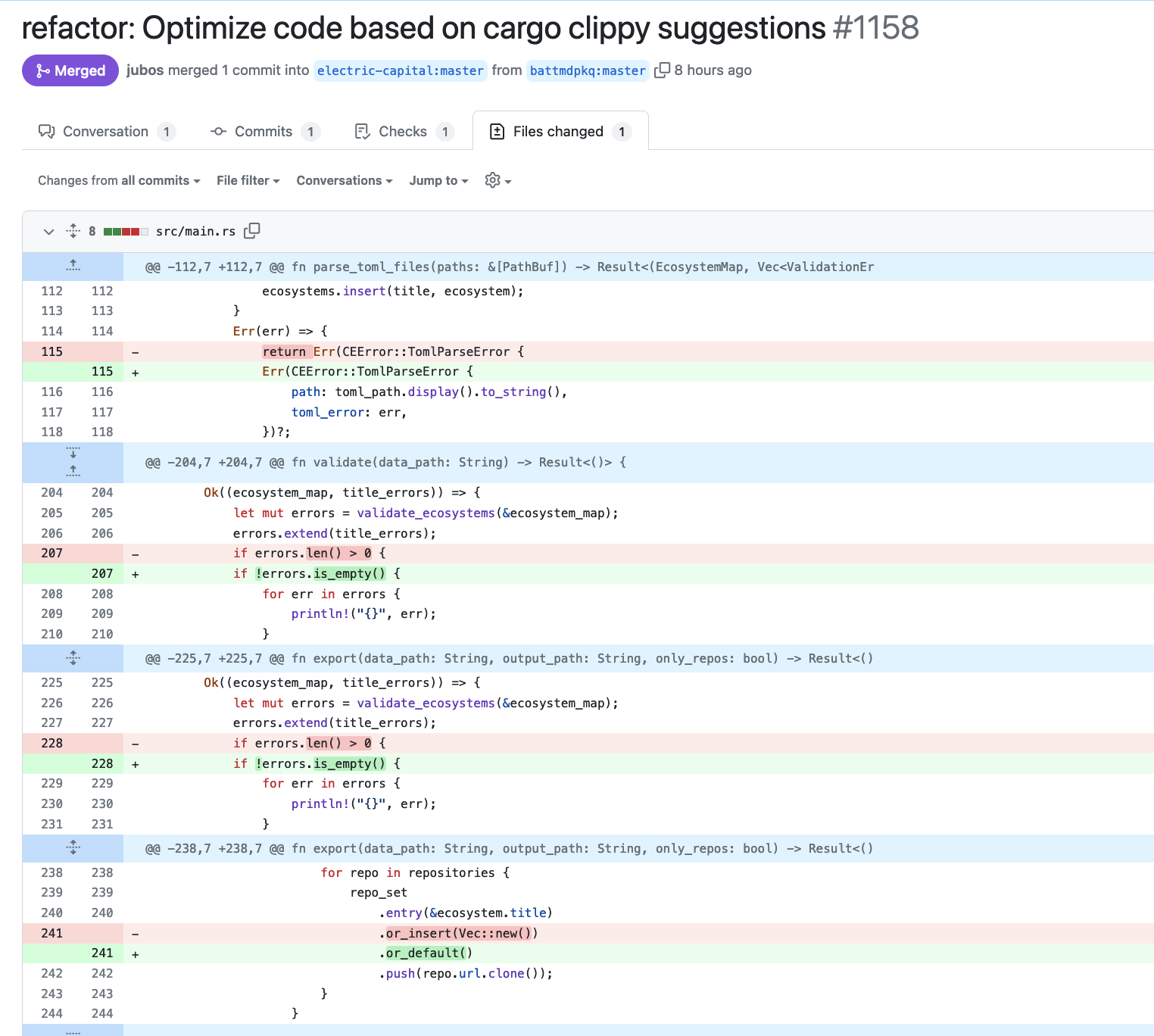

https://github.com/electric-capital/crypto-ecosystems/pull/1158/files

但对有的项目,执行 cargo clippy 会编译失败

多试几次,貌似就可以了…(可能和网络有关吧)

warning: use of deprecated associated function chrono::NaiveDateTime::from_timestamp_opt: use DateTime::from_timestamp instead

这个警告是因为在 Rust 的 chrono 时间库中, chrono::NaiveDateTime::from_timestamp_opt 这个方法已经被弃用了。此方法用于从 Unix 时间戳创建 NaiveDateTime 实例,但现在推荐使用更新的 chrono::DateTime::from_timestamp 方法代替。

解决方式很简单,只需将代码中使用 from_timestamp_opt 的地方改为使用 from_timestamp 即可。具体做法如下:

1 | // 之前的做法 |

新的 from_timestamp 方法与被弃用的 from_timestamp_opt 方法的主要区别在于:

from_timestamp返回DateTime<Utc>类型,而不是NaiveDateTime。DateTime包含时区信息,而NaiveDateTime则不包含。from_timestamp在传入无效时间戳时会 panic,而from_timestamp_opt会返回Option。

如果你需要一个 NaiveDateTime 实例,可以从 DateTime 获取:

1 | use chrono::{DateTime, NaiveDateTime}; |

通过这种方式修改代码,可以消除编译器的弃用警告,并且代码更加现代化。

GPT4:

根据 cargo clippy 的警告,chrono::NaiveDateTime::from_timestamp_opt 函数已被弃用,并建议使用 DateTime::from_timestamp 替代。然而,这里有一个小问题:DateTime::from_timestamp 返回的是一个带时区的 DateTime 对象,而不是一个 NaiveDateTime 对象。这意味着你需要选择一个时区。

假设你希望继续使用 UTC 时区,你可以按照下面的方式修改你的函数:

1 | use chrono::{DateTime, Utc}; |

在这段代码中,我首先使用 NaiveDateTime::from_timestamp 来创建一个 NaiveDateTime 对象,然后使用 DateTime::<Utc>::from_utc 将它转换为一个带 UTC 时区的 DateTime 对象。这样可以保留原有代码的行为,同时避免使用已被弃用的

瞎扯啊…

rust中%a, %d %b %Y %H:%M:%S GMT时间格式是什么?

在Rust的chrono时间库中, %a, %d %b %Y %H:%M:%S GMT 这个时间格式字符串代表以下含义:

%a: 星期的缩写形式,如 “Mon”, “Tue”。%d: 月份的日期,用0补齐的两位数字,范围01-31。%b: 月份的缩写形式,如 “Jan”, “Feb”。%Y: 四位数字表示的年份。%H: 24小时制的小时数,用0补齐的两位数字,范围00-23。%M: 分钟数,用0补齐的两位数字,范围00-59。%S: 秒数,用0补齐的两位数字,范围00-60(60用于闰秒)。GMT: 时区标识符,表示格林威治标准时间。

因此,%a, %d %b %Y %H:%M:%S GMT 这个格式字符串代表的时间格式为:

1 | Wed, 07 Mar 2024 15:09:28 GMT |

它显示了以下信息:

- 星期几(缩写形式)

- 月份的日期

- 月份(缩写形式)

- 年份

- 24小时制的小时数和分钟数

- 秒数

- GMT时区

这种格式通常用于格式化HTTP日期头或其他需要遵循互联网日期/时间格式的场合。它使用英文缩写而不是数字,以方便跨语言环境的阅读和解析。

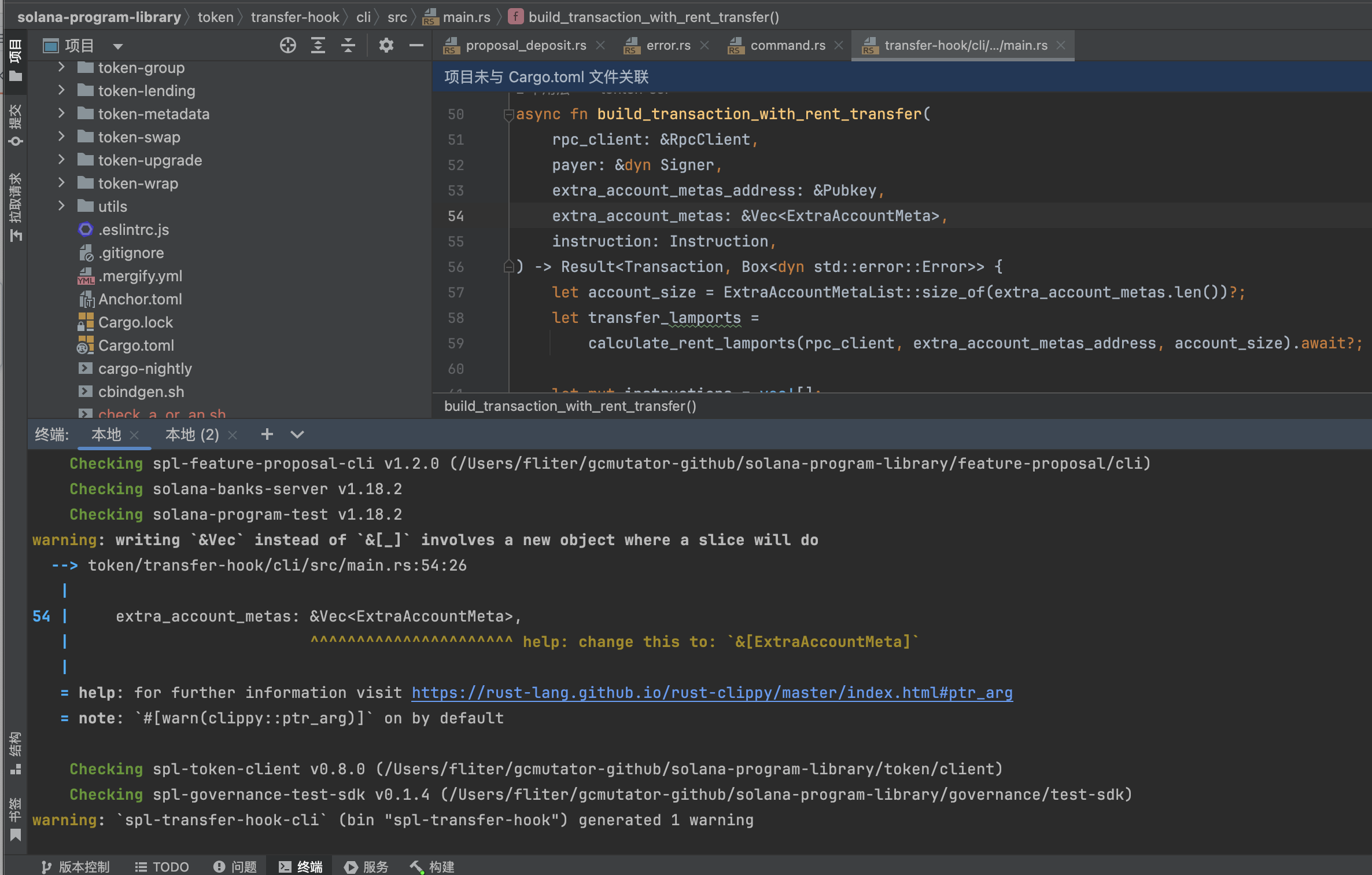

warning: writing &Vec instead of &[_] involves a new object where a slice will do

1 | warning: writing `&Vec` instead of `&[_]` involves a new object where a slice will do |

这个警告信息来自Rust编译器,指出代码中存在一个可能的改进点。具体来说,当你在函数签名或其他地方使用&Vec<T>类型作为参数时,而实际上使用&[T](一个切片的引用)就足够的情况,Rust编译器会给出这个警告。这个警告的主要目的是鼓励更灵活、更通用的代码编写方式。

原因

- 性能和灵活性:

&[T]表示一个借用的切片,它是一个指向连续数据的指针和数据长度的组合。使用&[T]比&Vec<T>更灵活,因为&[T]可以引用Vec<T>的全部或部分数据,也可以引用数组的一部分,或者任何连续存储的T类型的数据。这增加了函数的通用性和复用性。 - 避免不必要的对象创建:

&Vec<T>意味着你正在借用一个Vec<T>对象,而&[T]只是借用一段连续的内存。在某些情况下,使用&[T]可以避免创建新的Vec<T>对象,从而减少内存分配和提升性能。

示例

假设有一个函数打印数字的向量,以下是使用&Vec<T>和&[T]两种方式的对比:

使用

&Vec<T>(触发警告):1

2

3

4

5fn print_numbers(numbers: &Vec<i32>) {

for number in numbers.iter() {

println!("{}", number);

}

}使用

&[T](推荐方式):1

2

3

4

5fn print_numbers(numbers: &[i32]) {

for number in numbers.iter() {

println!("{}", number);

}

}

总结

当你看到这个警告时,考虑将函数签名中的&Vec<T>更改为&[T],以增加代码的灵活性和效率。这种做法遵循了Rust的零成本抽象原则,即尽可能地在不增加运行时开销的情况下提供抽象。

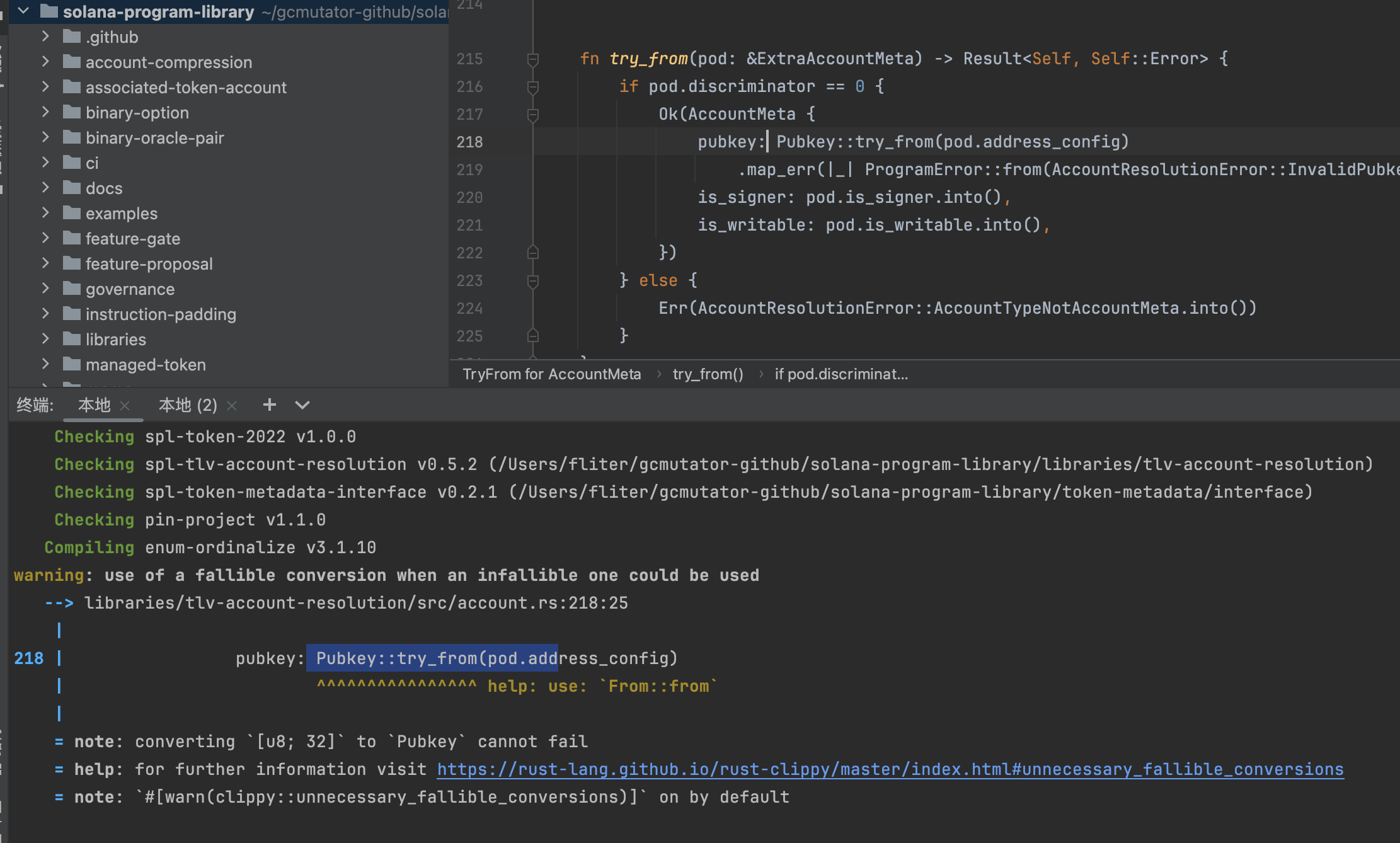

warning: use of a fallible conversion when an infallible one could be used

1 | warning: use of a fallible conversion when an infallible one could be used |

这个警告是由Rust的lint工具Clippy生成的,它指出你在代码中使用了一个可失败的转换(fallible conversion),即try_from,而在这种情况下,转换实际上是不可失败的(infallible)。这意味着你可以使用一个无法失败的转换方法,从而使代码更简洁、更清晰。

原因

在你的代码中,Pubkey::try_from(pod.address_config)尝试将pod.address_config(很可能是一个[u8; 32]类型)转换为Pubkey类型。根据警告信息,这个转换是不可能失败的,因为Pubkey显然可以直接从一个32字节的数组中构造而不会出错。这时使用try_from就显得不必要,因为try_from通常用于那些可能失败的转换,而这里的转换是绝对安全的。

解决方案

你应该使用From::from而不是Pubkey::try_from,因为前者是一个无法失败的转换。这样做可以消除不必要的错误处理代码,使代码更加简洁。

修改前的代码:

1 | pubkey: Pubkey::try_from(pod.address_config) |

修改后的代码:

1 | pubkey: Pubkey::from(pod.address_config) |

这样,你就遵循了Clippy的建议,使用了一个更加合适的转换方法。这不仅消除了警告,还提高了代码的清晰度和可维护性。

额外的说明

当你遇到这样的警告时,总是值得检查为什么一个看似可以失败的操作(如使用try_from)在这个上下文中被认为是不可失败的。这通常是因为类型系统和你的特定用例保证了转换的成功。理解这一点可以帮助你更好地利用Rust的类型系统和标准库提供的各种转换特性。

根据你之前提到的警告信息,转换Pubkey的过程是不可失败的(即,使用try_from是不必要的)。因此,我们可以直接使用From::from来进行转换,这样就不需要显式地处理任何错误了。

下面是改写后的代码:

1 | fn try_from(pod: &ExtraAccountMeta) -> Result<Self, Self::Error> { |

在这个改写版本中,我直接使用了Pubkey::from(pod.address_config)来代替Pubkey::try_from(pod.address_config).map_err(|_| ProgramError::from(AccountResolutionError::InvalidPubkey))?。这样做是基于之前的讨论:由于转换是不可失败的,我们就不需要包装错误处理了。这也意味着,你不需要map_err来转换错误,因为From::from不会产生错误。

这个改写的前提是你确信pubkey: Pubkey::from(pod.address_config)这一操作确实是不可失败的,这通常取决于Pubkey::from的实现和pod.address_config类型是否确实是[u8; 32]或兼容类型。如果Podkey::from对于输入类型是无条件成功的(如,它能处理任何长度为32的字节数组),那么这个改写就是安全的。

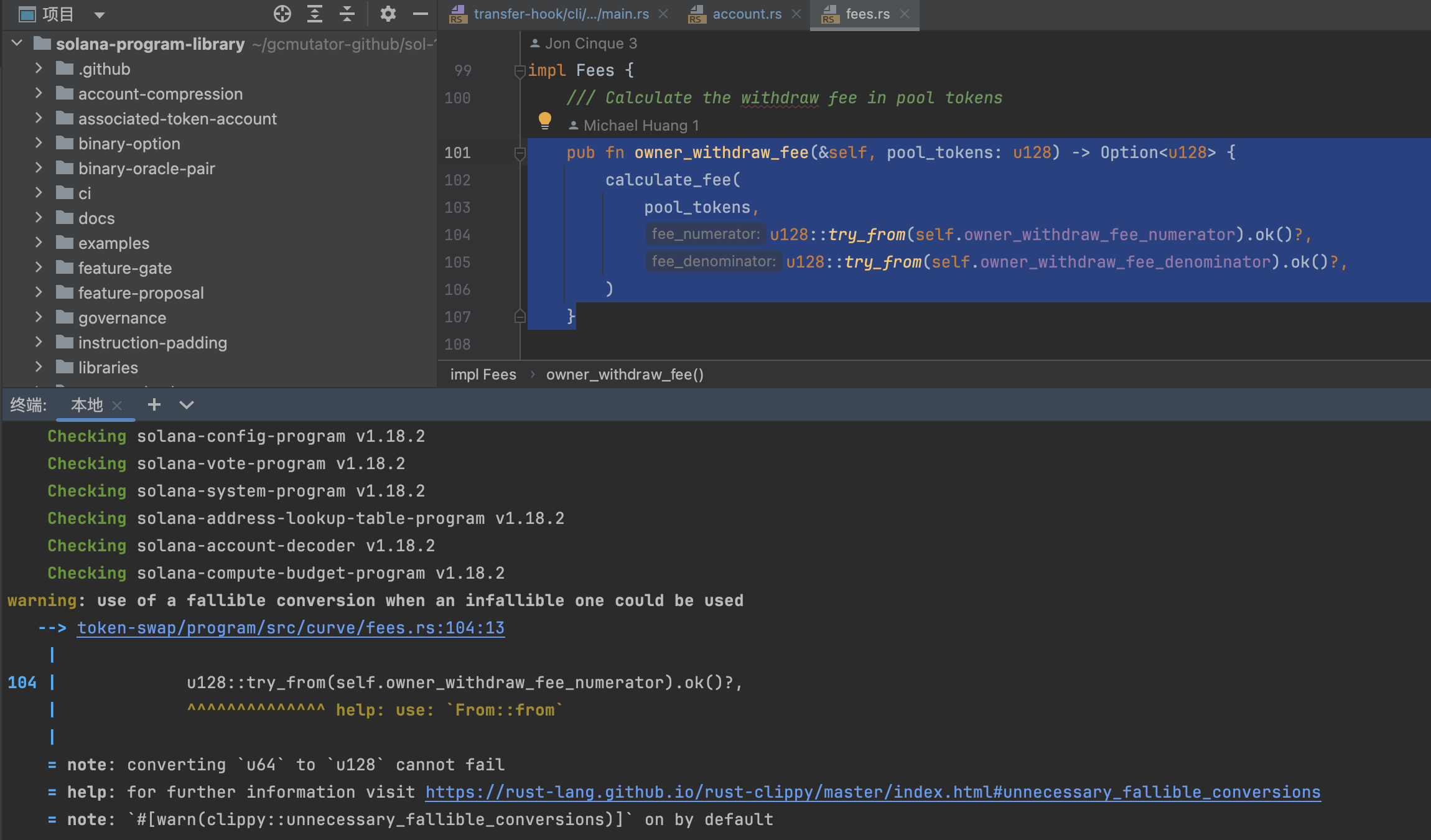

warning: use of a fallible conversion when an infallible one could be used

1 | warning: use of a fallible conversion when an infallible one could be used |

根据Clippy的警告,将u64转换为u128是一个不可失败的操作,因此推荐使用From::from而不是try_from。这是因为u128比u64有更大的表示范围,所以从u64到u128的转换永远不会失败。

你的原始代码使用了u128::try_from(...).ok()?,这里的.ok()?将结果转换为Option<u128>,如果转换失败则提前返回None。但由于这个转换是不可失败的,所以我们可以直接使用From::from进行转换,并且不需要处理任何潜在的错误。

改写后的函数应该像这样:

1 | pub fn owner_withdraw_fee(&self, pool_tokens: u128) -> Option<u128> { |

在这个版本中,我们直接使用u128::from(...)来转换self.owner_withdraw_fee_numerator和self.owner_withdraw_fee_denominator,这两个字段很可能是u64类型。这种方法既清晰又有效率,同时避免了不必要的错误处理代码。

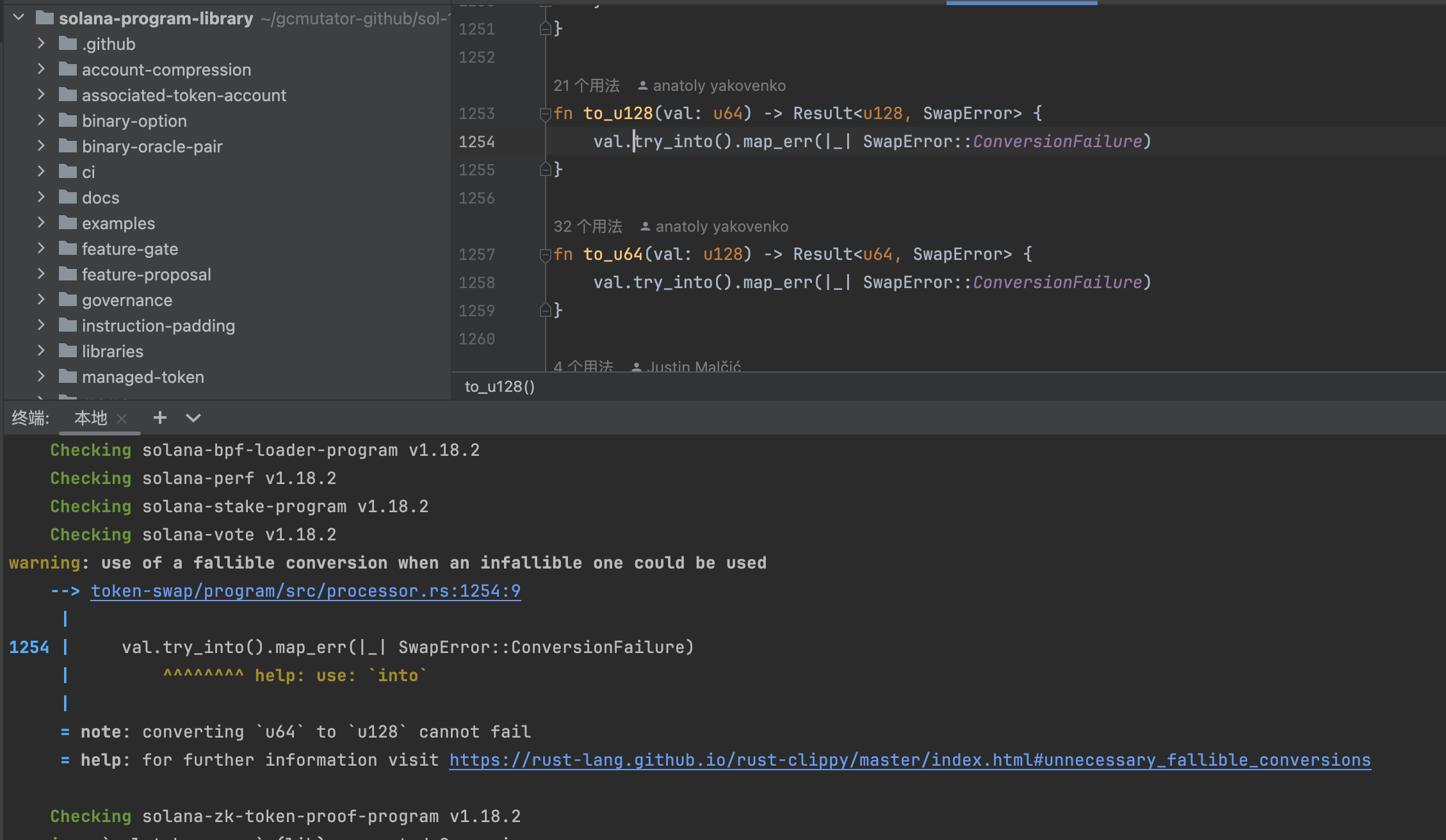

warning: use of a fallible conversion when an infallible one could be used

1 | warning: use of a fallible conversion when an infallible one could be used |

这个警告出现的原因是因为你使用了一个可失败的转换方法(try_into),尝试将u64类型的值转换成u128类型的值。然而,从u64转换到u128实际上是一个不可失败的操作,因为u128的值域比u64大得多,任何u64的值都可以无损地转换为u128。因此,Clippy建议使用不可失败的转换方法,以简化代码并提高其可读性。

解决方案

你可以直接使用into方法替代try_into,这样就不需要错误处理了。以下是改进后的函数:

1 | fn to_u128(val: u64) -> u128 { |

注意,由于转换不会失败,函数的返回类型也从Result<u128, SwapError>更改为了u128。这样,你就不需要使用map_err来处理任何错误了,因为根本不会有错误发生。

这种改动不仅消除了Clippy的警告,也让代码更加简洁明了。

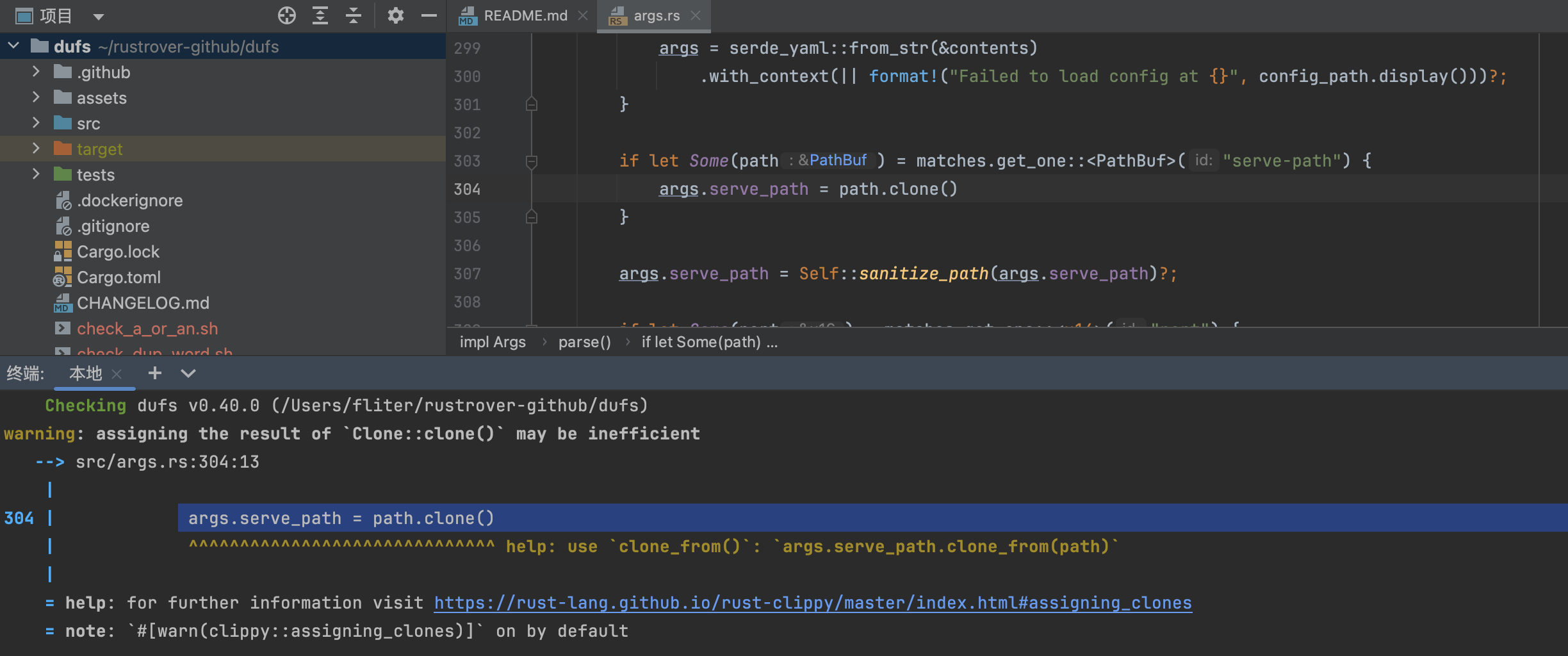

1 | warning: assigning the result of `Clone::clone()` may be inefficient |

Clippy的这个警告提示你,直接使用clone()来赋值可能是低效的。这是因为clone()方法会创建一个新的实例,并将其赋值给目标变量,这个过程可能包括分配新的内存。当目标变量已经是相同类型的实例时,使用clone_from()方法可以更高效,因为它可以重用目标实例的已有资源(如内存),减少资源分配的需要。

解决方案

根据Clippy的建议,你应该使用clone_from()而不是clone()。以下是改进后的代码:

1 | if let Some(path) = matches.get_one::<PathBuf>("serve-path") { |

在这里,args.serve_path.clone_from(path)会直接在args.serve_path上调用clone_from(),将path的值复制到args.serve_path中,而不需要创建一个新的PathBuf实例。这样,如果args.serve_path已经分配了足够的内存来存储新的值,它就可以重用这些内存,从而提高效率。

请注意,clone_from()方法的效率提升取决于具体类型和上下文。在一些情况下,当原始变量几乎没有预分配的空间或可以重用的资源时,使用clone_from()与clone()相比可能不会带来显著的性能改进。然而,遵循Clippy的建议通常是个好习惯,特别是在性能敏感的应用中,因为这可能会在复杂数据结构或大型集合的情况下带来优势。

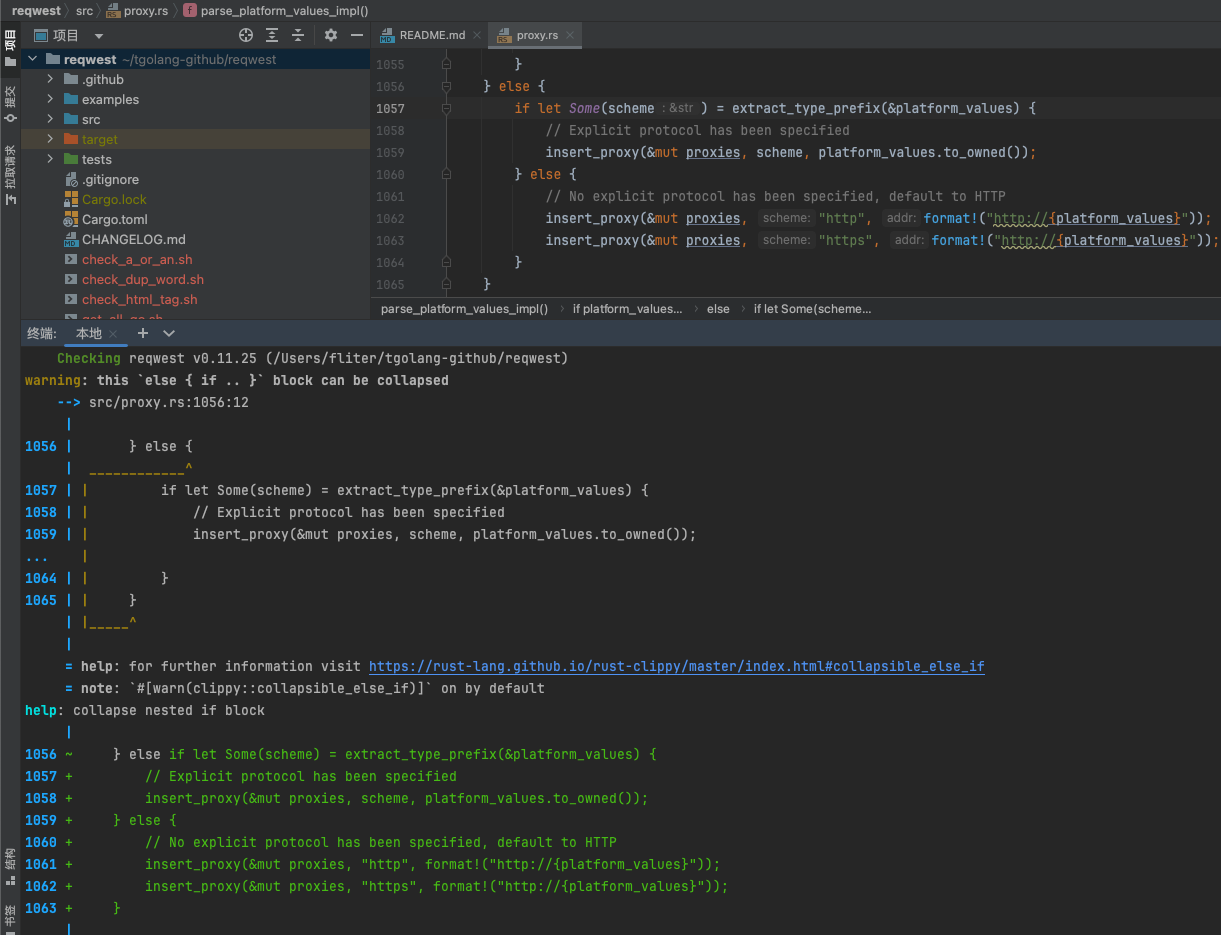

warning: this else { if .. } block can be collapsed

1 | warning: this `else { if .. }` block can be collapsed |

warning: this lifetime isn’t used in the impl

1 | warning: this lifetime isn't used in the impl |

这个警告意味着在你的impl块中定义的生命周期'a并没有在实现的方法或关联类型中使用。Rust生命周期参数(如'a)的主要用途是为了标注引用的生命周期,以确保数据的使用是安全的。如果一个生命周期参数在实现中没有被用到,那么它就是多余的。

在你提供的代码中,impl<'a> IntoUrlSealed for String这部分声明了一个生命周期'a,但是在impl块的方法定义中并没有使用这个生命周期。这通常是因为String类型拥有它自己的数据,而不是借用外部数据,所以在这个上下文中使用生命周期是不必要的。

如何修改

你可以简单地移除生命周期注解,因为String类型的方法并不需要生命周期参数。修改后的代码如下:

1 | impl IntoUrlSealed for String { |

这样修改后,代码仍然能够正常工作,且不会触发clippy的警告。此修改保留了原有的功能,同时去掉了不必要的生命周期声明,使得代码更简洁、更清晰。

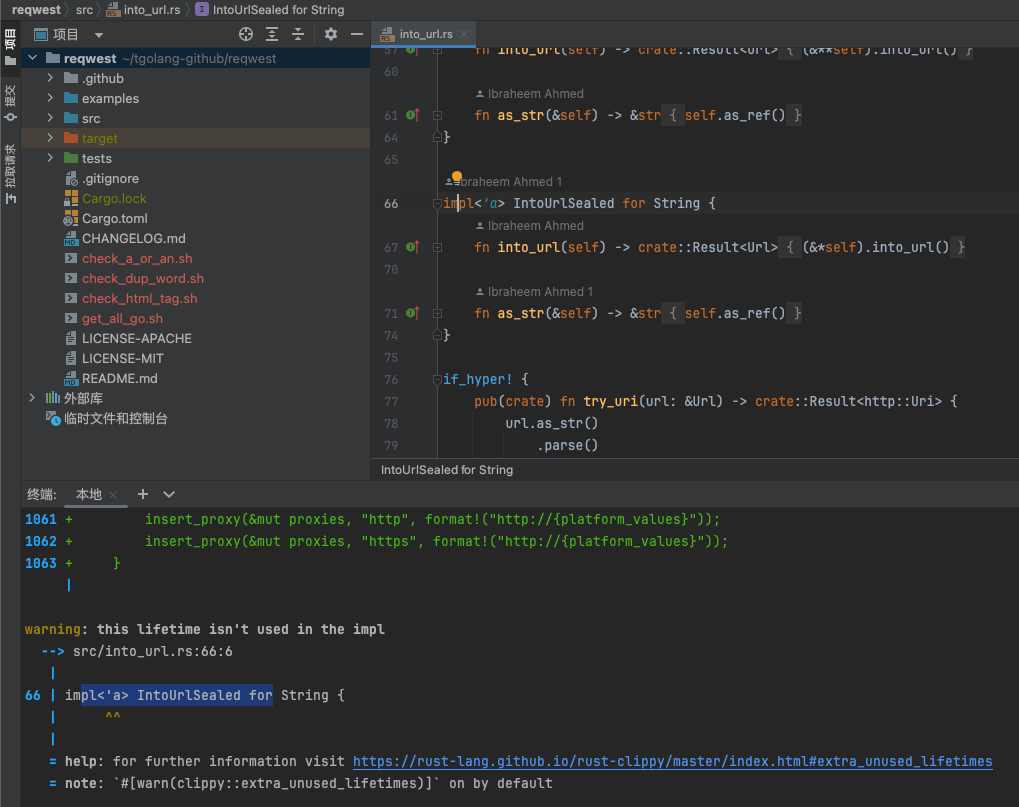

warning: this impl can be derived

1 | warning: this `impl` can be derived |

这个警告意味着你的impl Default可以通过Rust的自动派生(derive)功能来实现,而不是手动实现它。这样做可以简化代码,使其更加清晰易读。当Clippy提示这种类型的警告时,它通常建议使用#[derive(Default)]来自动生成Default的实现,前提是所有结构体字段本身也都实现了Default。

但在你的例子中,由于你使用了条件编译指令(#[cfg(feature = "gzip")]等),直接派生Default可能不会按预期工作,因为这些字段的默认值依赖于编译时的特性标志。

如何修改

你的情况稍微复杂一些,因为你想根据不同的编译特性来设置不同的字段值。如果所有的字段都实现了Default,且默认值都是true,你可以考虑修改结构体,为每个字段提供默认实现,并可能使用#[derive(Default)]。但是,由于使用了条件编译特性,每个字段的默认值只有在相应的特性被启用时才为true,这使得直接使用derive不太可行。

如果你确定需要根据不同的特性标志来条件编译字段,保持现有的手动实现可能是必要的,但是可以优化实现以避免警告。例如,你可以为结构体中的每个字段实现Default,让每个字段根据是否启用了相应的特性来决定它的默认值。

但根据你提供的代码和Clippy的警告,如果每个字段默认都是true,并且希望简化代码,你可能需要重新考虑设计。如果条件编译的逻辑对默认值有实际影响,手动实现Default可能是有意义的,但要注意解释为什么不能简单地使用derive。

最后,如果你确定手动实现是必需的(可能由于条件编译),并且想消除警告,考虑禁用该Clippy警告(如果这是在你控制范围内的话)。可以在文件顶部添加以下属性以忽略特定的Clippy警告:

1 |

这会禁用当前文件中的derivable_impls警告。然而,最好的做法是仔细评估是否真的需要手动实现Default,或者是否可以通过修改代码结构来利用Rust的derive功能。

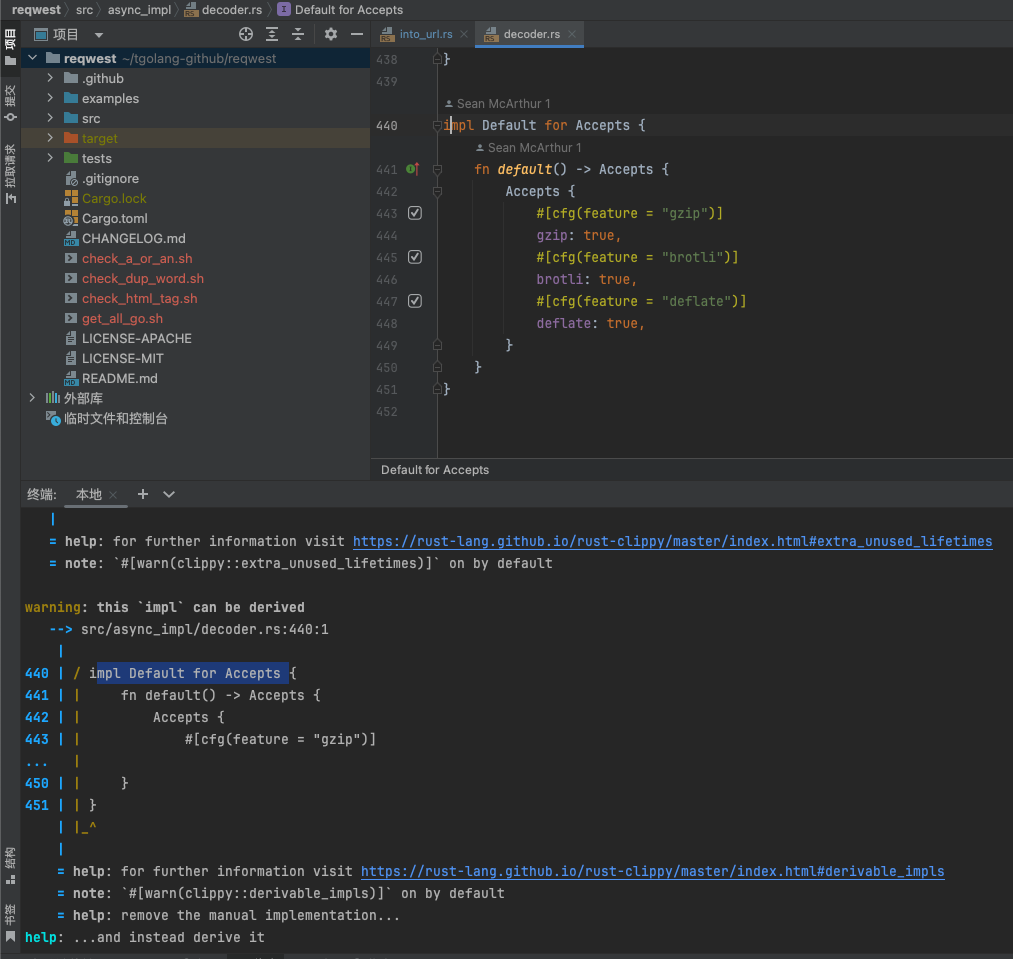

warning: manual Range::contains implementation

1 | warning: manual `Range::contains` implementation |

这个警告是Rust的静态代码分析工具Clippy提示的,它建议使用Range::contains方法来简化代码。当你手动检查一个值是否位于两个值之间时,使用Range::contains可以使代码更简洁、易读。

在你的例子中,你正在检查变量c是否大于或等于0x20且小于0x7f。Clippy建议使用范围对象(0x20..0x7f)的contains方法来替代手动的比较。

原代码

1 | } else if c >= 0x20 && c < 0x7f { |

修改后的代码

1 | } else if (0x20..0x7f).contains(&c) { |

这样修改后,你的代码会变得更加简洁和符合Rust的惯用法。contains方法会检查指定的范围是否包含了给定的值。请注意,这里的范围是左闭右开的,即包括起始值0x20,不包括结束值0x7f,与原始条件逻辑一致。

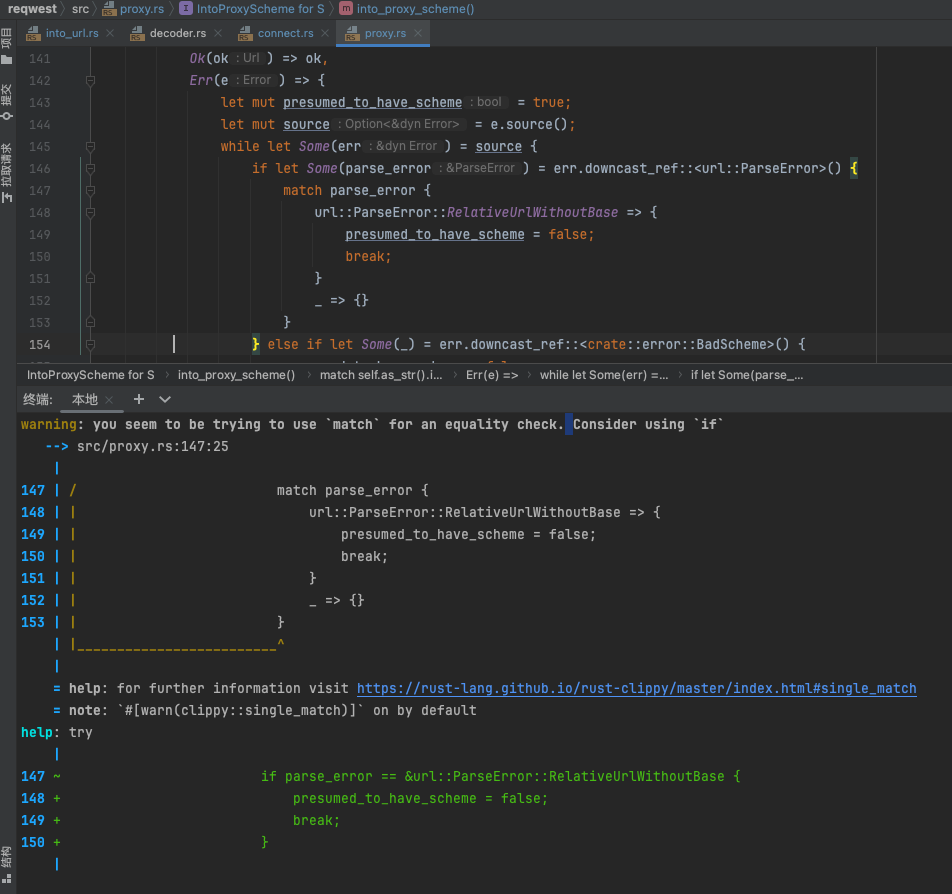

warning: you seem to be trying to use match for an equality check. Consider using if

1 | warning: you seem to be trying to use `match` for an equality check. Consider using `if` |

这个警告指出,你的match表达式实际上只用于检查一个条件(即parse_error是否等于url::ParseError::RelativeUrlWithoutBase),而这种情况下使用if语句通常更为清晰和简洁。Clippy推荐将match表达式替换为if语句进行等值比较。

要解决这个警告,你应该按照Clippy的建议修改代码。假设parse_error是通过某种方式得到的url::ParseError类型的变量,你可以这样重写:

原始代码

1 | match parse_error { |

修改后的代码

1 | if parse_error == url::ParseError::RelativeUrlWithoutBase { |

请注意,由于url::ParseError::RelativeUrlWithoutBase是一个枚举变体,这种直接的比较要求ParseError实现了PartialEq trait。幸运的是,大多数Rust枚举,尤其是标准库和流行crate中的枚举,通常都实现了这个trait。如果你遇到了没有实现PartialEq的情况,可能需要考虑其他方法来比较,但这在实践中很少见。

使用if语句不仅可以满足Clippy的建议,提高代码清晰度,也使得代码逻辑更直接、易于理解。

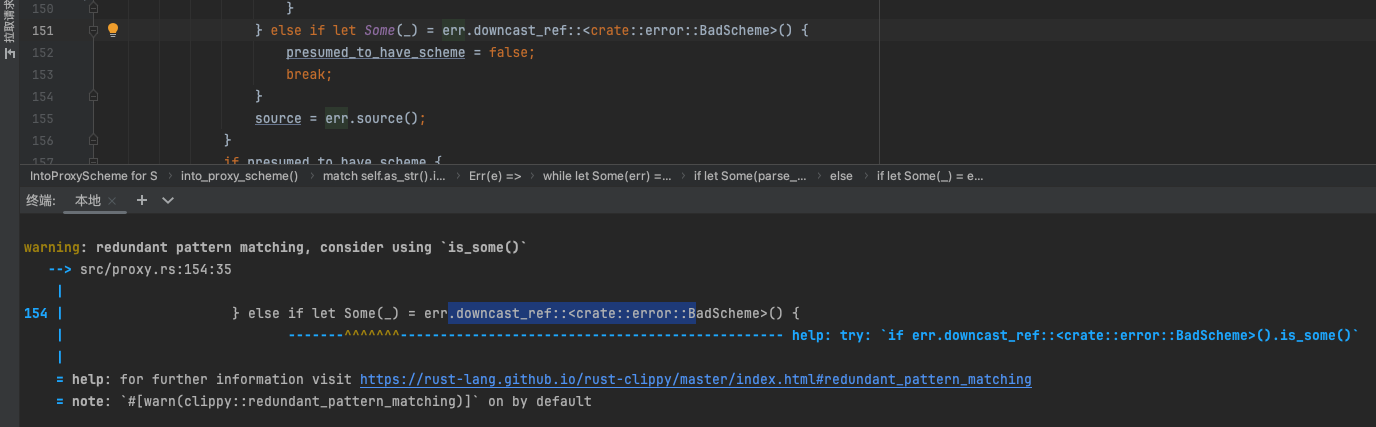

warning: redundant pattern matching, consider using is_some()

1 | warning: redundant pattern matching, consider using `is_some()` |

这个警告意味着你在使用if let来检查Option是否是Some值时,实际上并不关心这个Some中的值。在这种情况下,使用is_some()方法会更加直接和简洁。

原始代码

1 | } else if let Some(_) = err.downcast_ref::<crate::error::BadScheme>() { |

修改后的代码

1 | if err.downcast_ref::<crate::error::BadScheme>().is_some() { |

这种修改使得代码更加简洁和直接。通过直接调用is_some()方法,你可以检查Option是否包含一个值,而不用编写用于匹配并忽略该值的模式。这种方法提高了代码的可读性,并且更符合Rust的惯用法。

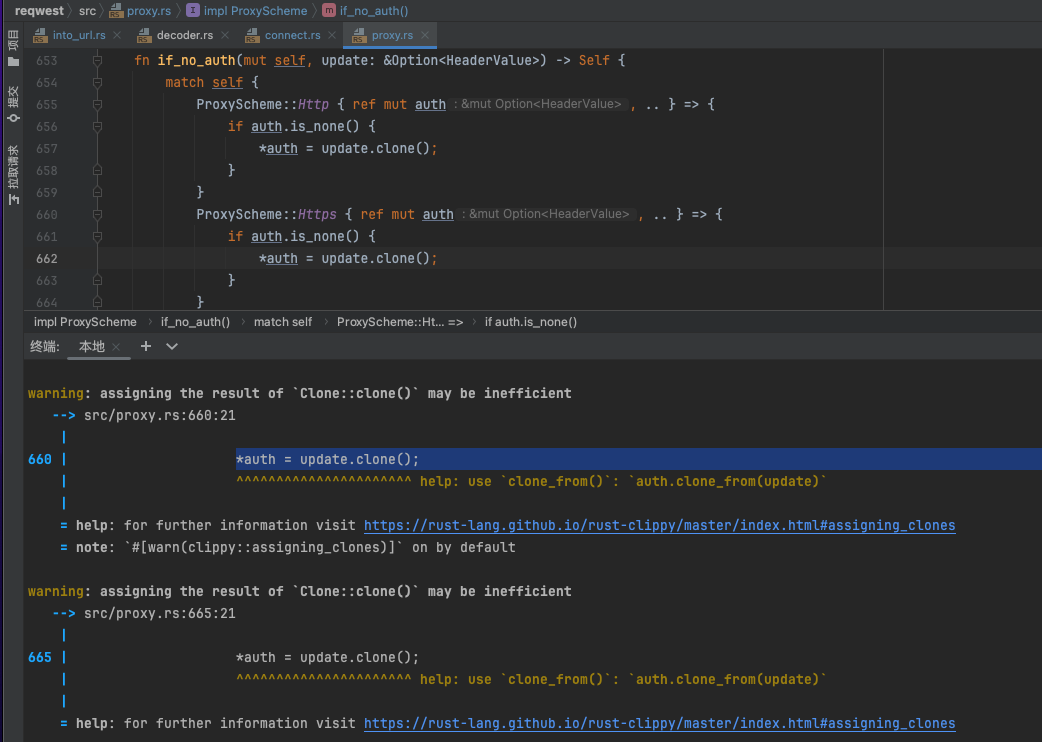

warning: assigning the result of Clone::clone() may be inefficient

1 | warning: assigning the result of `Clone::clone()` may be inefficient |

这个警告的意思是,在你的代码中直接使用clone()方法并将其结果分配给一个变量可能是低效的。Rust的Clippy工具推荐使用clone_from()方法来提高效率。clone_from()方法会将目标变量直接更新为源变量的克隆,这通常比创建一个新的克隆然后分配它更有效率,尤其是在涉及到大型数据结构时。

原始代码

1 | *auth = update.clone(); |

修改后的代码

1 | auth.clone_from(update); |

通过这种方式修改你的if_no_auth函数中相关的代码,可以提高代码的效率。修改后,你的函数可能看起来像这样:

1 | fn if_no_auth(mut self, update: &Option<HeaderValue>) -> Self { |

通过使用clone_from(),当update是Some值时,auth会被更新为update的克隆,如果update是None,则auth也会被设置为None。这种方式避免了不必要的分配和潜在的效率低下问题。

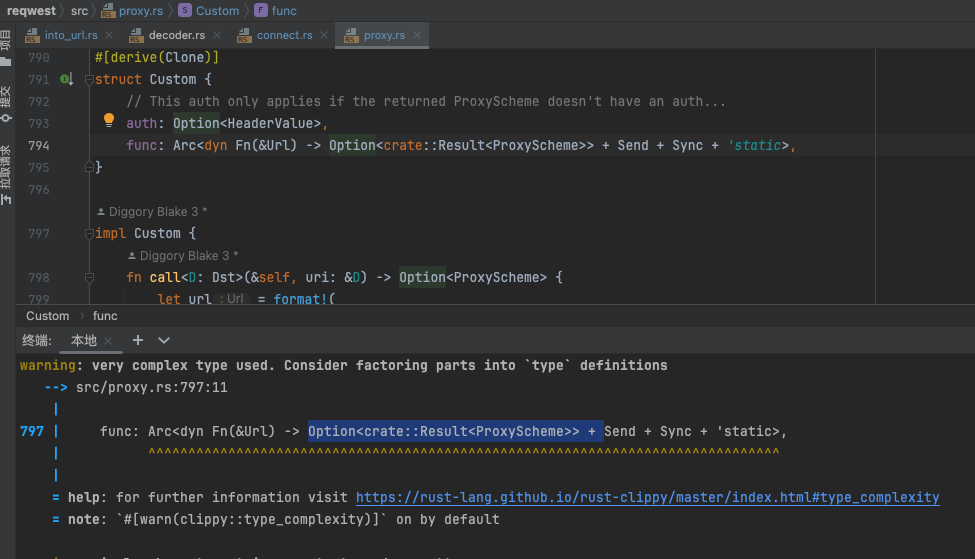

warning: very complex type used. Consider factoring parts into type definitions

1 | warning: very complex type used. Consider factoring parts into `type` definitions |

这个警告意味着你在struct定义中使用了一个非常复杂的类型。Clippy建议将复杂的类型分解为更简单的type定义,以提高代码的可读性和可维护性。

原始代码

1 | struct Custom { |

解决方案

你可以通过定义一个新的类型别名来简化func字段的类型定义。这样做可以使代码更清晰,同时保持类型复杂性的封装。

1 | type ProxySchemeFunc = Arc<dyn Fn(&Url) -> Option<crate::Result<ProxyScheme>> + Send + Sync + 'static>; |

这种方式定义了一个新的type,名为ProxySchemeFunc,它是原先复杂类型的别名。这样,Custom结构体就变得更加清晰易读,同时仍然保留了原有的功能。通过这种重构,你可以更容易地理解和使用Custom结构体,特别是在涉及到复杂类型的情况下。

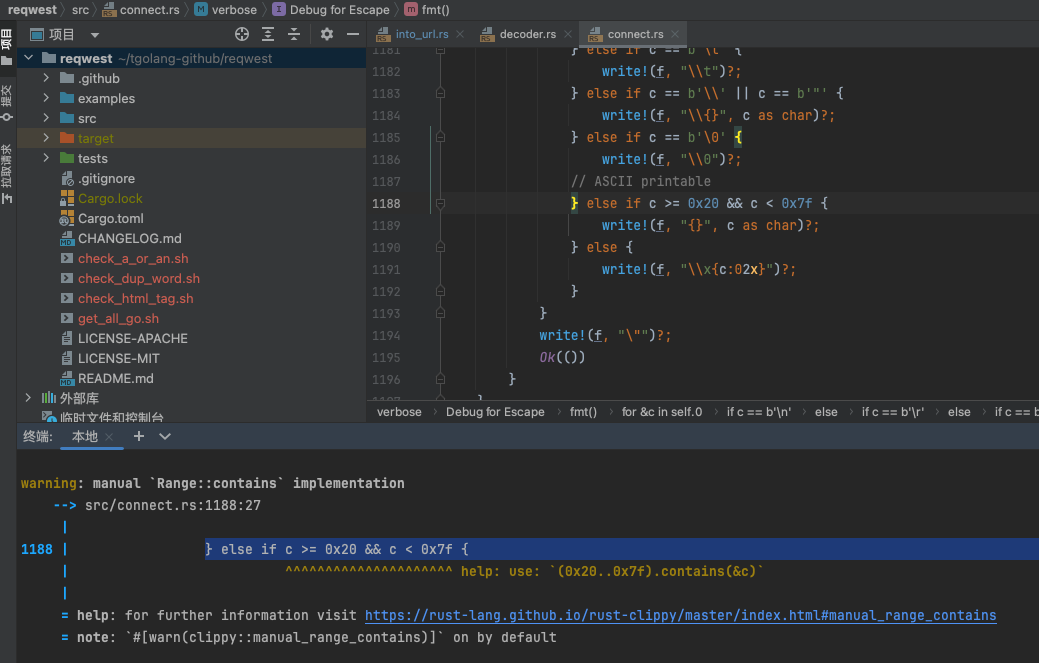

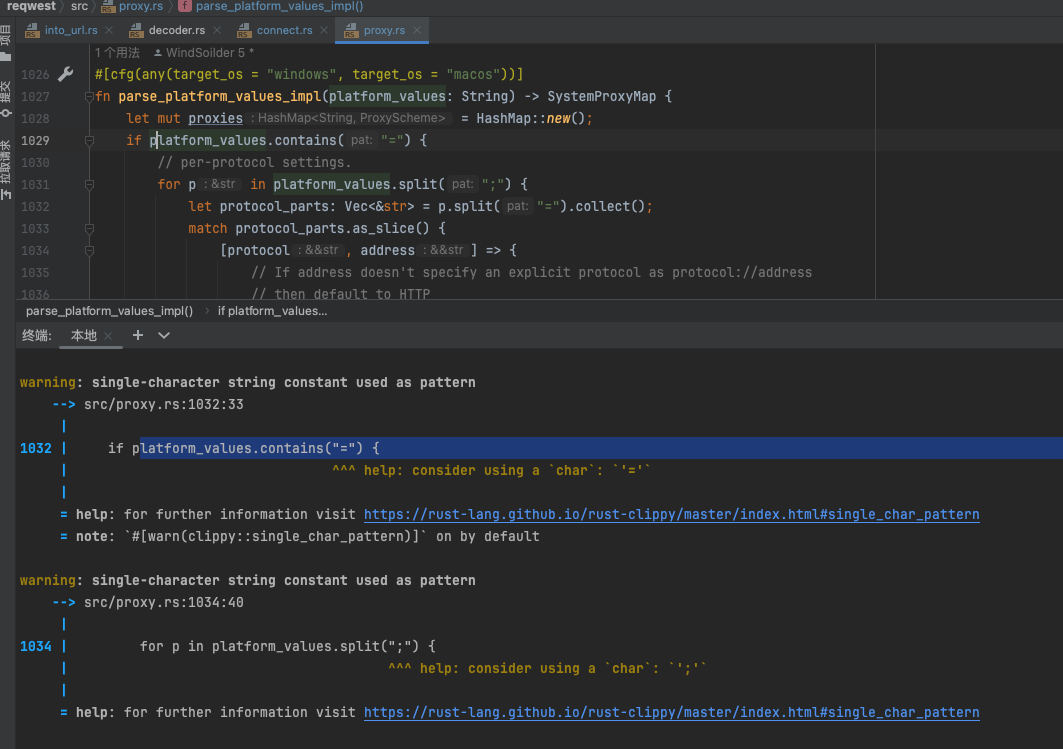

warning: single-character string constant used as pattern

1 | warning: single-character string constant used as pattern |

这个警告指出,你在使用.contains()方法时用了一个单字符的字符串常量作为搜索模式。Clippy建议,当搜索模式是单个字符时,使用字符类型(char)而不是字符串类型(&str),因为使用字符在性能上更优。

原始代码

1 | if platform_values.contains("=") { |

修改后的代码

1 | if platform_values.contains('=') { |

通过将"="改为'=',你使用了字符字面量而不是字符串字面量。这种修改可以提升性能,因为它避免了字符串模式匹配的一些额外开销,特别是在进行简单的字符查找时。

warning: the item Iterator is imported redundantly

1 | warning: the item `Iterator` is imported redundantly |

这个警告信息是Rust编译器提供的,指出在你的Rust代码中有一个不必要的导入(unused import)。具体来说,你在src/util/min_max.rs文件的第一行中显式导入了std::iter::Iterator,但这其实是冗余的,因为Rust的预导入模块(the prelude)已经包含了Iterator。

Rust的预导入模块自动导入了一些最常用的trait和类型,以方便开发者使用,而无需每次都显式导入。Iterator trait就是其中之一。因此,当你显式地导入Iterator时,编译器会告诉你这是冗余的,因为它已经通过预导入模块可用了。

这个警告本身不会阻止你的代码编译或运行,但它指示你可以简化你的代码。解决这个警告的方法是删除use std::iter::Iterator;这行代码。这样做不会影响你的代码功能,但会使代码更干净,遵循Rust的最佳实践。

编译器还提示说,这个警告是在#[warn(unused_imports)]这个属性下产生的,默认情况下这个属性是启用的,旨在帮助开发者识别和移除代码中未使用的导入,从而保持代码的整洁。

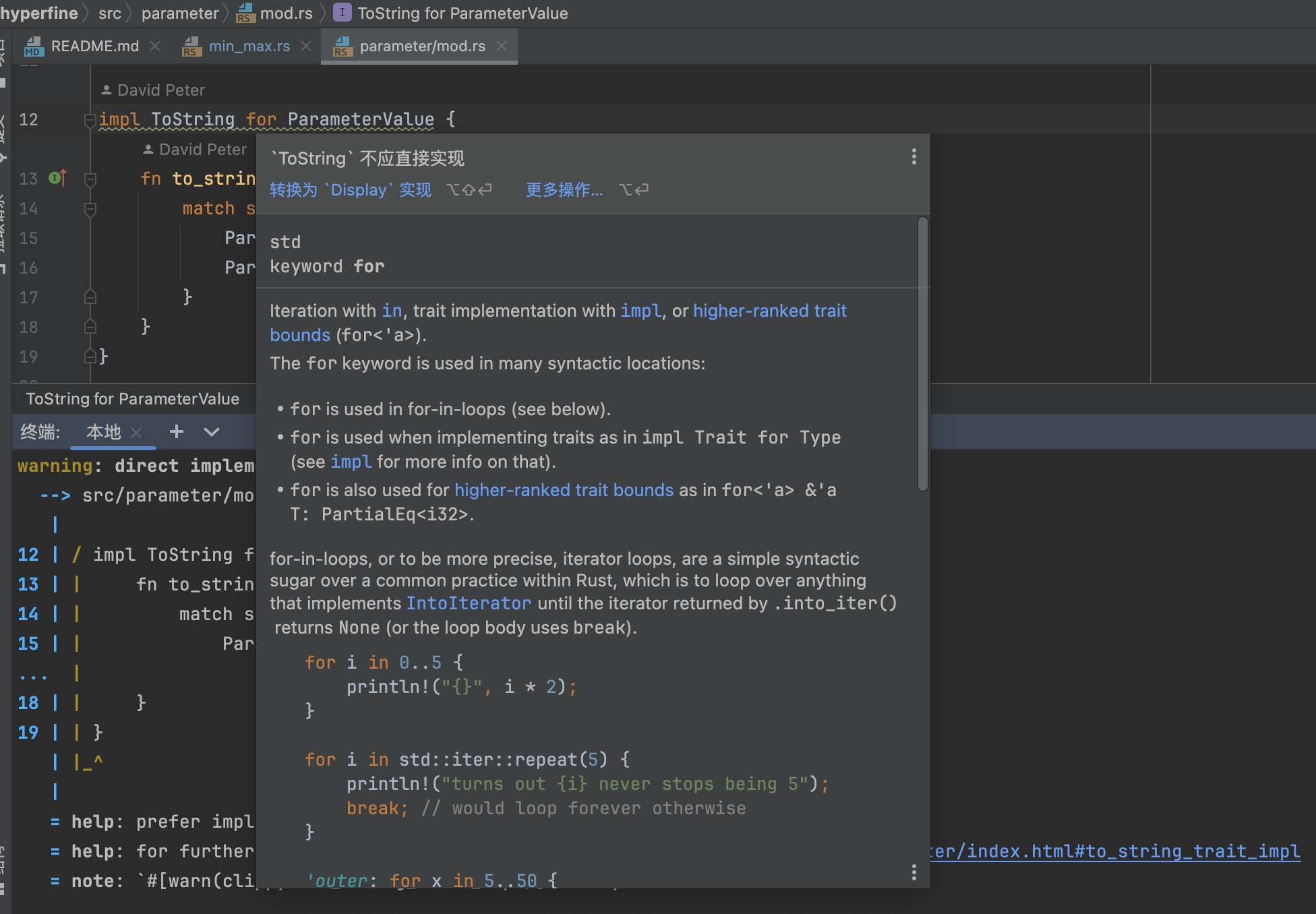

warning: direct implementation of ToString

1 | warning: direct implementation of `ToString` |

这个警告是由Rust的lint工具Clippy发出的,指出你直接为ParameterValue类型实现了ToString trait,而更推荐的做法是实现Display trait。在Rust中,ToString trait能够让任何实现了Display的类型自动拥有,因此直接实现Display通常是更好的选择。这样做不仅可以让你的类型支持转换为字符串,而且还能让它通过{}格式化参数在打印等场合使用。

解释

当你为一个类型实现了Display trait,ToString trait会通过Display自动实现,因此你无需直接实现ToString。这样做的好处是代码更简洁且更符合Rust的惯用法。

如何解决

要解决这个警告,你需要将ToString实现替换为Display的实现。基于你提供的代码片段,下面是一个示例修改:

1 | use std::fmt; |

这里,我们使用std::fmt模块中的Display和Formatter,以及fmt::Result。write!宏用于向提供的formatter写入格式化的字符串。这与你原来为ToString实现的to_string方法中使用的逻辑相似,只不过这次是实现Display trait,并通过write!宏输出。

将ToString替换为Display之后,你的类型依旧可以使用.to_string()方法,因为Rust标准库会为所有实现了Display的类型自动提供ToString的实现。这样,你不仅遵循了Rust的最佳实践,还保留了将类型转换为字符串的功能。

warning: the item ignore is imported redundantly

1 | warning: the item `ignore` is imported redundantly |

这个警告意味着在你的Rust代码中,通过use ignore::{self, WalkBuilder, WalkParallel, WalkState};这行代码进行的ignore模块导入是多余的。具体来说,当你写ignore::{self, ...}时,self关键字代表ignore模块本身。但在这种情况下,Rust编译器指出ignore模块已经通过其他途径(比如Rust的预导入机制,即prelude)被导入到当前作用域中了,或者在这个上下文中,ignore可能直接就是当前包(crate)的一部分。

解释

在Rust中,预导入(prelude)是一个包含了Rust标准库中多数常用功能的小集合,这个集合自动被每个Rust程序导入,以减少需要手动导入的常用类型和trait的数量。然而,通常来说,ignore库并不在预导入中,所以这个警告可能是由于你的项目结构或模块的特定设置导致的。

如何解决

你可以通过移除对ignore模块的显式自引用来解决这个问题。如果ignore是你的代码中直接使用的外部crate,那么这个警告可能是不正确的——这种情况下,它不会被预导入机制导入,除非有特殊的项目配置导致这种行为。

为了解决这个警告,你应该检查是否真的需要在这里显式引用ignore模块本身。如果你的代码中没有直接使用ignore模块(比如通过ignore::something()这样的方式调用),那么你可以安全地移除self:

1 | use ignore::{WalkBuilder, WalkParallel, WalkState}; |

如果这个警告是误报(可能由于特定的项目设置或其他原因),并且你确实需要这样的导入语句,考虑检查项目的配置或查看Rust社区的最佳实践和指南,看看是否有推荐的处理方式。然而,大多数情况下,简单地移除冗余的部分并根据编译器的指导进行代码调整即可解决这类警告。

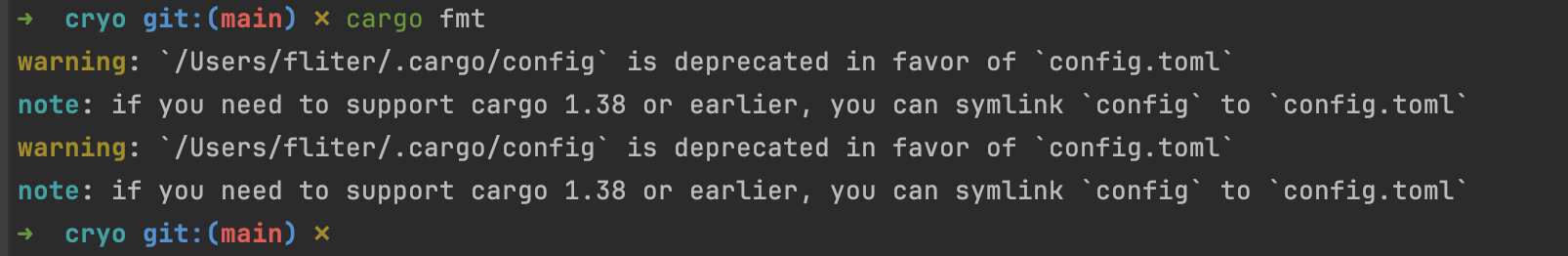

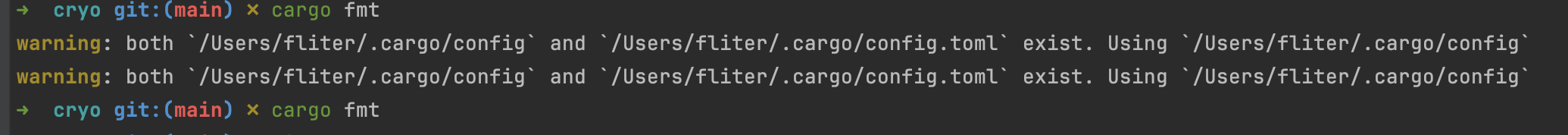

cargo fmt

1 | warning: `/Users/fliter/.cargo/config` is deprecated in favor of `config.toml` |

这个警告信息是指在Rust的包管理器Cargo中,旧版本的配置文件.cargo/config已经被弃用,取而代之的是新的config.toml文件。

具体来说:

警告信息说明你的用户根目录

/Users/fliter下有一个.cargo/config的旧配置文件,这种格式已被废弃。新版Cargo使用的是

config.toml文件,这是一种更结构化、可读性更好的TOML格式配置文件。注意部分提醒,如果你需要兼容旧版本的Cargo(1.38或更早),可以创建一个符号链接,将

config链接到config.toml文件。

为了解决这个警告,你有两个选择:

创建一个新的

config.toml文件,通常位于~/.cargo/config.toml或项目根目录下。将原来config文件中的配置迁移到新文件。如果确实需要兼容旧版Cargo,可以创建符号链接:

1 | ln -s ~/.cargo/config.toml ~/.cargo/config |

这条命令在.cargo目录下创建一个名为config的符号链接,指向config.toml文件。

采取以上措施之后,下次使用Cargo就不会再看到这个警告了。

删除掉.cargo/config就行了

cargo fmt 和cargo fmt –check有何区别?

cargo fmt 和 cargo fmt --check 都是用于格式化 Rust 代码的命令,但它们有一些区别:

- 格式化行为

cargo fmt 会直接对源代码文件进行格式化,将代码格式调整到符合 Rust 官方代码风格指南。这个命令会直接修改源文件。

cargo fmt --check 则不会修改源文件,只会检查代码是否符合代码风格指南。如果代码格式有问题,它会输出需要修改的地方,但不会直接修改文件。

- 退出码

cargo fmt 在格式化完成后总是会返回 0 作为退出码。

cargo fmt --check 如果代码格式有问题,会返回非零的退出码,通常是 1。如果代码格式没有问题,则返回 0。这使得 cargo fmt --check 可以被用于持续集成环境中,检查代码格式并在格式有问题时中止构建过程。

- 输出

cargo fmt 在格式化代码时不会产生太多输出,除非发生错误。

cargo fmt --check 会输出需要格式化的代码行以及对应的问题,方便开发人员定位并修复。

总的来说,cargo fmt 用于直接格式化代码,而 cargo fmt --check 用于检查代码格式,并且更适合在持续集成环境中使用。大多数情况下,开发人员会先运行 cargo fmt --check 检查代码格式,解决所有格式问题后,再运行 cargo fmt 对代码进行实际格式化。

Improve Your Code With Linting

编写干净、惯用的代码并不容易。你需要考虑风格、约定、代码复杂性、性能等诸多因素。当一组开发者在同一个项目上工作时,问题变得更加复杂。幸运的是,我们有一个工具可以帮助我们写出更好的代码 — 我说的是代码检查工具(linters)。代码检查工具可以自动检查代码的风格、正确性、复杂性、代码气味等。在今天的视频中,我们将介绍如何利用代码检查来改善你的Rust代码。

在我们开始之前,请确保通过访问letsgetrusty.com/cheatsheet获取你的免费Rust速查表。说完这些,我的Rust同事Tommy将为大家解释如何在Rust中使用代码检查。

谢谢Bogdan。Rust编译器(称为rustc)内置了一系列代码检查,这些检查会在编译过程中进行评估。你可以通过运行”rustc -W help”或参考rustc手册来查看完整的检查列表。此外,这些检查被整合成几个组,我们稍后会在视频中讨论。

每个检查都被设置为一个级别。可用的级别有:allow(允许)、warn(警告)、force-warn(强制警告)、deny(拒绝)和forbid(禁止)。

- allow告诉检查工具忽略违反该检查的情况。

- warn告诉检查工具在违反该检查时产生警告。

- force-warn与warn相同,但不能被覆盖。

- deny告诉检查工具在违反该检查时产生错误。

- forbid与deny相同,但不能被覆盖。

让我们看看这些检查如何影响你的代码。这里有一个简单的Rust程序示例:我们有一个名为Point的结构体和一个名为get_random_point的函数。在main函数中,我们创建两个随机点并打印其中一个。

要运行rustc检查工具,我们可以编译程序或运行cargo check。运行cargo check后,我们看到三个警告:一个是关于未使用的变量bar,违反了unused_variables检查;一个是关于Point结构体中未使用的字段,违反了dead_code检查;还有一个是关于函数名不是蛇形命名法,违反了non_snake_case检查。这些警告之所以出现,是因为它们的默认级别被设置为warn。

然而,我们可以通过添加检查属性来覆盖这些检查的默认级别。例如,我们可以直接在Point结构体、get_random_point函数和bar变量上添加allow检查属性,或者在main.rs顶部添加整个crate级别的allow检查属性。这些检查级别的覆盖将影响crate中的所有代码。注意,在添加crate级别的属性时,必须在#号后使用感叹号。

如果我们再次运行cargo check,我们就不会看到任何警告了。

覆盖默认检查级别有几个原因:

在原型开发时,我倾向于禁用与未使用代码相关的检查,这样我就可以专注于处理编译时错误。这可以通过指定单个检查或检查组来实现。例如,我们可以将unused组中的所有检查级别更改为allow,这包括dead_code检查和unused_variables检查。

对于生产代码,你可能想将unused检查组的级别更改为deny,这样未使用的代码就会导致编译时错误。此外,你还可以将non_standard_style检查组的级别更改为deny。

做出这些更改后,运行cargo check将产生几个错误。要修复这些错误,我们需要将函数名更新为蛇形命名法,使用bar变量,并确保Point结构体的x和y字段被使用。

现在我们已经介绍了代码检查的基础知识,让我们讨论一下Rust自带的另一个工具,叫做Clippy。Clippy是一系列检查的集合,用于捕捉常见错误并改进你的Rust代码。它是rustc检查的超集,实际上它在内部运行cargo check,而且比rustc更加固执己见。当你通过rustup安装Rust时,Clippy也默认包含在内。

如果我们对之前的代码示例运行cargo check,我们不会看到任何警告。但是,如果我们运行cargo clippy,我们会得到一个警告,指出我们使用了被列入黑名单的变量名foo。

正如你所看到的,Clippy更加固执己见,这会导致更多的检查警告。Clippy还提供了一些额外功能。例如,你可以运行”cargo clippy –fix”来自动应用一些检查建议。此外,除了通过属性更改Clippy检查级别外,你还可以在一个名为clippy.toml的单独文件中配置检查级别。

要了解更多关于Clippy功能以及如何向Clippy添加新检查的信息,请查看Clippy的GitHub仓库。

如你所见,Rust为我们提供了出色的代码检查工具来改进我们的Rust代码。在你离开之前,请确保通过访问letsgetrusty.com/cheatsheet获取你的免费Rust速查表。说完这些,我们下次再见。

Linting Rust Code With Clippy CLI Rules 🤯🦀 Rust Programming Tutorial for Developers

大家好,我叫Trevor Sullivan,欢迎回到我的视频频道。非常感谢你们加入我们的另一个Rust编程教程系列视频。

在这个特定的视频中,我们将重点关注一个工具,你可以在Rust工作流程中使用它来编写更健壮和高性能的代码,而且你还可以通过使用这个工具来学习更多关于Rust编程语言的知识。这个叫做Clippy的工具通常包含在Rust安装中,当你使用rustup安装程序安装Rust工具链时,它包括了rustdoc CLI、cargo CLI和Rust编译器rustc。

Clippy是一个非常酷的工具,因为它能够检查你的代码文件,并就如何改进你的代码项目提出建议。最终,当你使用Clippy来帮助指导你编写Rust代码时,你会得到更多的代码一致性,可能会得到一些你甚至不知道可能的新Rust代码技术的建议,而且你还可以确保在团队环境中的一致性。当你开始有多个团队成员都在同一个项目上工作时,这是一个非常重要的事情。你知道,一个团队成员可能更喜欢某种语法或使用某种方法,但另一个团队成员可能使用不同的机制来完成同样的任务。因此,通过使用Clippy,它提供了这些护栏,以确保你的整个团队都在遵循相同的Rust代码编写最佳实践。

正如我提到的,Clippy是Rust工具链的一部分。然而,如果你安装的是最小版本的Rust工具链,那么这个组件可能没有被安装。事实上,如果你安装的是最小版本的工具链,它很可能没有被安装。但幸运的是,如果你使用的是rustup安装程序,你可以简单地指定你想要在你的Rust安装中添加Clippy组件,那就会在你的环境中安装Clippy,然后你就可以随时调用Clippy了。

你可以在命令行中使用Clippy,当你构建Rust代码时,但你也可以将Clippy作为CI/CD管道的一部分。所以,每当你的团队成员进行git commit然后git push到你的代码仓库时,作为在那个push事件期间被触发的CI/CD管道的一部分,你可以执行Clippy工具。你可以在配置文件以及命令行参数中设置一些配置选项,然后如果Clippy在你的代码中发现任何问题,你实际上可以中止你的管道的执行,让开发人员回去修复任何代码问题,然后继续更新,再次进行git commit和git push,并修复这些类型的问题。

所以你可以把Clippy作为一种学习工具,你可以把它作为一种把关工具(以一种好的方式,而不是坏的方式),你可以帮助确保你的团队正在编写一致的、高质量和高性能的代码。现在有超过650个lint规则。我知道Clippy文档书中说有600个lint规则,但如果你查看Clippy的开源仓库,你可以看到在他们最新版本的readme中,他们确实自豪地声称有超过650个lint规则可用。

当我们谈论linting时,linting基本上就是当一个软件工具检查你的源代码文件,并寻找任何可能的改进或你可能遇到的任何潜在错误。这有助于你尽早在开发生命周期中捕获错误。你真的不想在构建过程中可能花20分钟,然后才意识到你的代码中有一个问题。如果你能尽早从Clippy那里得到即时反馈会好得多,这样一旦你在代码中遇到某种警告或错误,你就可以立即回去修复它,推送更新的代码,并尽快得到反馈。

所以我强烈建议使用Clippy,你可能会想,作为一个Rust初学者,为什么我想引入更多的护栏?为什么我想引入更多的问题,当我已经在试图学习Rust编程语言,可能已经遇到了一堆障碍?好吧,再次强调,这将根据这些linting规则提供指导。这些规则不仅被配置为检测问题,还提供建议并加深你对这些特定问题的理解,这样下次你编写类似的代码时,你就可以以更好的方式编写代码。所以我实际上认为,在你的Rust学习周期中,从这些工具中得到这些错误和警告是一件积极的事情,因为你能够有一个工具为你提供那些护栏。所以把这看作是一种会伴随你,帮助你提供更好的Rust代码编写建议的东西,而不是把它看作是一种阻碍或阻挡你的东西,因为它实际上最终是在帮助你编写更好的Rust代码。

现在这650个lint规则是很多不同的规则,它们在Clippy中被分类成这些默认类别。我们有clippy::all, clippy::correctness, clippy::suspicious, clippy::style, clippy::complexity, clippy::performance相关的东西,默认情况下,每个这些类别都应用了某些警告或允许级别。例如,如果你看一些围绕cargo manifest文件的东西,那些默认情况下是被允许的,但如果你想让特定的规则被捕获为警告或实际上阻止执行并在你运行Clippy工具时出错,那么你可以把这里的默认级别从allow改为warn或deny。我们稍后会更详细地讨论这些级别,但基本上每个这些类别都被分解成这些针对你的代码中非常精确的建议的单独规则。

所以它会在你的代码中寻找某些模式,它会寻找某些方法调用,它会查看你传入函数的参数数量,或者也许你的函数代码有多长,诸如此类的事情。其中一些事情实际上也有可定制的参数。实际上有一个单独的配置文件,我们可以在Clippy中使用,来告诉它如何应用这些不同的规则。

如果我们看一下这个配置部分,这里有一个特殊的文件,你可以添加到你的项目中,叫做clippy.toml,或者你可以用.clippy.toml来隐藏它。它会在你的项目目录中寻找那个文件,或者你实际上可以指定一个环境变量来定位那个配置文件,或者你可以指定这个manifest目录,你的cargo manifest所在的位置。但默认情况下,它只会在你的shell指向的当前目录中查找。所以这是使用它的最简单方式,而不是必须设置这些环境变量中的一个。

但在这个配置文件内部,它基本上就是一个ini或toml类型的文件格式,但它实际上没有任何标题。所以你没有那些带有标题的方括号,就像你在cargo.toml文件中那样。我们所做的就是设置一个变量,我们将变量设置为某个值,你可以在实际lint的文档中找到所有这些输入配置选项或这些变量以及它们支持的值。

所以如果我们看一下这里的readme,然后点击这个链接去到linting规则页面,这会直接带我们到这个Clippy lint页面。这会向你展示所有这些单独的规则,如果你愿意,你实际上可以按组过滤它们。所以我们可以说我只想看与我的cargo.toml配置相关的东西,或者也许我想看与性能相关的东西。所以我’ll只点击none,然后包含performance组,正如你所看到的,这里有相当多的与性能相关的规则。

然后一旦你找到一个规则,比如说让我们看一下to_string,例如,如果你正在使用println!宏,你正在输入一个字符串,你在它上面调用to_string方法以便将其转换为字符串,这个规则基本上是在检查那个,并说嘿,你实际上不需要调用to_string,因为你传入println!宏的东西已经实现了Display trait,所以因此println!宏能够解析如何将那个对象输入作为字符串表示。所以基本上它只是建议你消除这个to_string方法调用,这将提供更高性能的代码。

现在正如我提到的,Clippy是Rust工具链的一部分,然而,如果你安装的是最小版本的Rust工具链,那么这个组件可能没有被安装。事实上,如果你安装的是最小版本的工具链,它很可能没有被安装。但幸运的是,如果你使用的是rustup安装程序,你可以简单地指定你想要在你的Rust安装中添加Clippy组件,那就会在你的环境中安装Clippy,然后你就可以随时调用Clippy了。

现在你可以在命令行中使用Clippy,当你构建你的Rust代码时,但你也可以将Clippy作为你的CI/CD管道的一部分。所以每当你的团队成员做一个git commit然后git push到你的代码仓库,作为在那个push事件期间被触发的CI/CD管道的一部分,你可以执行Clippy工具。你可以在配置文件以及命令行参数中设置一些配置选项,然后如果Clippy在你的代码中捕获到任何问题,你实际上可以中止你的管道的执行,让开发人员回去修复任何代码问题,然后继续更新,再次进行git commit和git push,并修复这些类型的问题。

所以你可以把Clippy作为一种学习工具,你可以把它作为一种把关工具(以一种好的方式,而不是坏的方式),你可以帮助确保你的团队正在编写一致的、高质量和高性能的代码。另一件很好的事情是Cargo可以实际上自动修复一些这些问题。不幸的是,我没有看到任何种类的具体性在这里来确定一个规则是否可以自动修复,所以你将不得不玩一下个别规则来弄清楚它们是否支持自动修复,但有一个叫做–fix的参数,你可以传递给cargo clippy命令,那将自动触发任何这些问题的修复,如果它们支持自动修复的话。

我们也有这些linting级别。我之前简要提到过这些,并说我们会更深入地看一下它们。有allow、warn、deny和none,但实际上还有几个其他级别。所以如果我们回去看一下Clippy文档,看一下我认为它在使用部分下面,也许让我们看看它是否在这里,让我快速搜索一下,搜索levels。

无论如何,这些级别允许我们指定像force_warn和force_deny这样的东西,后者也被称为forbid。我不完全确定它在文档中的哪里,但有一个force_warn和一个forbid选项,你也可以设置,那些在这个接口中没有显示,但你可以使用它们来基本上防止有人覆盖那些特定的规则。

现在你实际上如何启用这些规则呢?有两种不同的方式可以做到这一点。第一种是你可以直接进入你的源代码,使用一个属性来配置这些lint。另一件你可以做的事是实际上在clippy命令行上指定linting规则。所以当你运行cargo clippy来调用Clippy工具时,你实际上可以指定你想要它在你的代码中警告或拒绝访问的规则。

所以这个例子右在这里要向你展示如何基本上拒绝所有规则。这是一个属性语法,你可以在整个模块或整个crate上使用,如果你在你的lib.rs文件中指定的话。所以你可以使用这个基于属性的语法,但你也可以使用这个语法,我们可以用-A允许,也有一个–allow是拼出来的。我通常更喜欢使用拼出来的参数,因为如果你在读你的代码时,它们更清晰,因为-a是相当模糊的,那可能意味着很多不同的东西,它可能意味着all,它可能意味着allow,它可能意味着一大堆不同的东西。所以我通常喜欢把这些拼出来。

同样,–warn或-W是你如何指定警告级别,如果你想拒绝某些东西,你实际上可以使用-D或–deny,然后指定规则的名称。你会注意到,当你指定一个规则的名称时,它需要以clippy和一个双冒号作为前缀,然后你可以指定规则的名称。所以如果你在这个文档中查看lint列表,只要记住,如果你在命令行中使用它,你需要使用以clippy双冒号为前缀的lint名称。

无论如何,我们将看一下一些这些规则,以及我们如何将它们纳入

好的,我会继续翻译和整理剩余的内容:

默认情况下,它只会在您的shell指向的当前目录中查找。所以这是使用它的最简单方法,而不是必须设置这些环境变量之一,但在这个配置文件中,它基本上只是一个ini或toml类型的文件格式,但它实际上没有任何标题,所以您没有那些带有标题的方括号,就像在cargo.toml文件中那样。我们所做的只是设置一个变量并将变量设置为某个值,您可以在实际lint的文档中找到所有这些输入配置选项或这些变量以及它们支持的值。

所以如果我们看一下readme,然后点击这个链接到linting规则,那会直接带我们到这个Clippy lint页面,这将向您显示所有这些单独的规则,如果您愿意,您实际上可以按组过滤它们。所以我们可以说我只想看与我的cargo.toml配置相关的东西,或者也许我想看与性能相关的东西。所以我只需点击none,然后包括performance组,正如您可以看到的,这里有相当多的与性能相关的规则。然后一旦您找到一个规则,比如说让我们看一下to_string,例如,如果您正在使用println!宏,并且您正在输入一个字符串,并且您在其上调用to_string方法以将其转换为字符串,这个规则基本上是在检查这一点,并说嘿,您实际上不需要调用to_string,因为您传入println!宏的东西已经实现了Display trait,因此println!宏能够解析如何将该对象输入转换为字符串表示。所以基本上它只是建议您消除这个to_string方法调用,您的代码仍然会完全正常工作。

现在这是只抱怨未使用的变量,但让我们看看我们是否可以强制它失败。所以如果我做一个cargo然后clippy命令,那将调用linter,正如您可以看到的,当我用cargo clippy调用clippy时,它将查看我们在源文件中声明的这些基于属性的规则,它将解析这些,它将查找这些linting规则的任何配置选项,然后使用静态分析评估我的代码,以查看是否有任何问题,正如您可以看到的,它说错误此函数参数太多。它说规则表明我们想要七个,再次,这是默认值,我没有在我的clippy配置中定制它,但我们实际上有八个参数。

所以现在让我们说我想自定义参数数量,这将导致违反这个规则。另外,再快速提一下,如果我们在bash中做Echo $?,您可以看到我们得到一个退出代码101。所以如果您在CI/CD管道中,并且您在那里做自动化,您可以检查该退出代码,看看它是101还是非零,这将表明在clippy linting中发生了某种错误,然后您可以立即中止构建过程,中止CI/CD管道,如果您使用SaaS供应商做CI/CD并且按分钟付费,比如GitHub Actions或者可能是Circle CI或Travis CI之类的,这将为您节省大量CI/CD分钟。所以这是一种很好的方法来检测是否有任何问题。

我们也可以把这个改成warn,说不是deny而是我只想警告这个,然后如果我们警告,我们不会得到非零退出代码,我们只会得到零退出代码,但在标准输出中我们仍然会看到有一些建议。

所以现在我们要做的是自定义参数数量。这个too_many_arguments lint规则的默认值是七个参数,所以我们放了八个参数来违反规则,但是让我们说我们想自定义它,说我想要最多四个参数。

所以再次,我们将抓取这个配置选项,它允许我们自定义这个特定的too_many_arguments规则,我们将在我们的项目中创建一个新的配置文件,我们将其称为.clippy.toml,您不必在开头加上句点,它也会查找clippy.toml,然后我们需要做的就是粘贴那个设置并将其设置为我们想要的值。所以我们的函数最多可以有四个参数,如果我们违反了这一点,那么我们将对该问题发出警告或者我实际上会把它改回deny。

所以现在我们将做cargo clippy,正如您可以看到的,我们有八个,但它现在只想要四个。这就是我们如何将该自定义选项应用于这个lint规则。所以现在如果我消除这些额外的参数,我将把它们放在一个注释中,以防我需要把它们放回去。

现在我们可以从这里的输入中消除这些,现在这应该满足规则。所以如果我们再次做cargo clippy,您可以看到我们得到了关于未使用变量的警告,但我们不再得到警告或者说拒绝,而是说我们有太多参数的错误。当然,如果我们回去再添加第五个参数并重新运行cargo clippy,那么我们将再次得到该错误以及101退出代码。

所以这就是我们如何应用属性来拒绝整个crate的这个特定规则,因为#!适用于父级,所以main.rs的父级是crate本身,所以这应该适用于我们的整个crate,我们也可以将东西应用到单个实体。所以如果我们想这样做,比如说我们想允许未使用的变量,对吧?

所以让我们做一些像未使用的东西,我们将搜索未使用的参数,我想,让我们搜索参数或未使用的变量,也许,我没有看到那个显示出来,但有一个允许未使用变量的,我不确定那个可能实际上不是一个clippy规则,但让我们看看这里可能有的其他类型的警告,看看我们是否能找到另一个。

所以,我认为另一个实际上是to_string,所以让我们继续搜索to_string,我认为那将是一个警告,一个与性能相关的类别警告。所以让我们继续尝试在一个例子中使用这个。所以如果我们有一个实现了Display trait的类型,我们在其上调用to_string,它应该捕捉到那个作为一个警告,正如您可以看到的,这里底部没有配置部分,所以这个特定设置没有额外的配置选项。所以我们要做的就是定义什么是这个to_string。

所以我们将定义另一个测试函数,我们将说test_string,让我们也修复这个,我们将继续删除那个第五个参数,这样它就满意了。所以我们可以在这里说我们想允许未使用的变量,所以让我们说let x等于5,我们也可以使用参数作为输入,比如arg1是一个无符号16位整数。

正如您所看到的,我们得到了未使用的变量,未使用的参数,再次得到了未使用的变量。所以如果我们只想在这个特定函数上允许未使用的变量,我们可以只使用一个#而不带感叹号,这将直接应用于属性定义后面的项目。所以我们可以说allow,然后在这里指定unused_variables,我不认为这是一个clippy规则,因为它没有以clippy为前缀,我也在clippy文档中找不到它。所以请注意,我认为那个只是内置在rust中。

所以现在您可以看到那些警告立即消失了,如果我们在这里运行cargo clippy,那么您可以看到我们只得到函数test_string从未被使用,但我们不会得到那些未使用变量的警告,就像我们在上面的test_lint函数中得到的那样。

所以如果我们注释掉那个属性并重新运行cargo clippy,我们应该在test_string函数上得到一个未使用变量,而之前它只报告test_lint函数上未使用的参数或变量。所以它抱怨我们没有使用test_string函数上的参数arg1,它抱怨我们没有使用我们在test_string函数体内声明的x变量,但我们想测试的规则是这里的to_string规则,对吧?

所以我们要做的是继续做一个println!操作,让我们做println!,我们将指定花括号,然后我们将传入我认为x,我不确定它是否明确实现了Display,但我猜它可能实现了,我们也可以使用一个字符串值,比如”hello from rust Trevor”,然后我们将传入x,是的,那很好,它是一个字符串,然后我们可以在这里做.to_string。所以现在我们正在取一个字符串切片,我们实际上通过调用to_string方法将其转换为堆分配的字符串。

所以现在如果我们做cargo clippy,那么您应该看到这个警告,它说to_string被应用到一个已经实现Display trait的类型上。所以这又是一个与性能相关的东西,它说嘿,您实际上不需要这个to_string方法调用,所以继续消除它,您的代码仍然会完全正常工作,对吧?所以一旦我们在这里重新运行,我们不再得到那个警告,这有助于通过默认提供那个linting护栏来提高我们应用程序的性能。

再次说明,该特定规则的默认级别是警告,所以它不会默认允许它,但有一些东西默认是允许的,您可以为它们启用警告或拒绝操作,比如这里的这些。如果您只是按允许规则过滤,那么这些是默认允许的类型的东西,但您可以在配置中启用它们,您实际上可以设置一个警告属性或一个拒绝属性。

为了在命令行指定这些规则,这是第二种方法。到目前为止,我们已经看了应用于crate级别、父级别和个别函数级别或个别结构级别、枚举和traits等的基于属性的语法,但如果我们想在我们的CLI中包含其中一些规则,我们可以做cargo clippy,看一下帮助,然后我们可以警告、允许、拒绝或禁止我们代码中的某些东西,禁止将是最严格的,因为那将基本上忽略您有的任何覆盖,所以那是如果您想在代码中禁止某些东西的最严格选项,但让我们只说现在,我们想也许允许,让我们看看,让我们允许这里的to_string函数,对吧?

所以我们将再次做x.to_string,但这次我们将继续指定我们想在我们的clippy CLI中允许它,而不是将其指定为基于属性的语法。所以我们要做的是说cargo clippy,然后在这里放两个破折号,因为那将阻止任何参数被传递给cargo,两个破折号之后的任何东西都将被clippy本身解释,而不是cargo,所以我们要做的是说我们想也许允许clippy,然后它叫什么,让我们在这里对to_string警告做一个过滤。所以它叫做to_string_in_format_args,所以我们基本上想做clippy::然后to_string_in_format_args。

让我看看我能不能从某个地方复制那个,我只会在这里复制它,我们会点击那个按钮,然后我们会做clippy::然后粘贴那个,哦,当然,当我复制它时,它包含了前缀。所以现在如果我们运行这个,您可以看到我们没有得到那个特定的警告,如果我们运行时没有那个参数,我们确实得到这个警告,说to_string被应用到已经实现Display trait的类型,但如果我们用–allow运行,那将消除那个警告的出现。也如果我们想把那个警告改成拒绝,我们可以简单地指定–deny而不是allow,所以现在这将在clippy调用期间导致一个错误,那将早早地捕获那个规则,现在我们得到一个101退出代码。所以再次,我们可以中止我们的管道。

所以这些真的是应用事物的两种方式,我们可以在属性级别做到这一点,以获得整个crate的某种全局范围,我认为在模块范围内也可以工作,所以如果您有一个子模块,您可以将这个属性插入到那里,我想那可能会工作,然后我们也可以使用这个语法来应用到直接跟随的元素,当我说元素时,当然我指的是函数、traits、枚举、结构体等等。

好的,让我们看看clippy的快速修复或自动修复。你可能早些时候注意到,当我们做cargo clippy –help时,cargo CLI实际上有一个–fix选项。所以你要把这个传递给cargo而不是clippy,所以你要在双破折号之前放它,那将是一个传递给cargo的选项,双破折号之后的任何东西都会传递给clippy特别地。所以如果我们做cargo clippy –fix,然后在那之后插入我们的clippy选项,那么我们就可以应用

我理解您的要求。我会按照原文的顺序整理内容,使其更加合理通顺,同时保留所有细节,不会省略或总结任何内容。然后我会将整理后的内容翻译成中文。以下是整理和翻译后的内容:

让我们来看看Clippy的快速修复或自动修复功能。你可能注意到,当我们运行”cargo clippy –help”时,Cargo CLI实际上有一个”–fix”选项。你需要将这个选项传递给Cargo,而不是Clippy。也就是说,你要把它放在双破折号之前。双破折号之后的任何内容都会被传递给Clippy。

如果我们运行”cargo clippy –fix”,然后在后面添加我们的Clippy选项,我们就可以应用自动修复。让我们看看是否有一个自动修复可以移除这个to_string方法的调用。

目前的情况是,如果我们在这里使用deny,我们会在to_string这里得到一个错误,因为我们在命令行中将默认的警告改成了显式的deny。但现在,我们要在这些双破折号之前添加”–fix”。

你可以看到,默认情况下它会警告我们有未提交的更改。但是,很像发布操作(我们可能还没有讨论过向crates.io注册表发布crate),我们可以指定一个叫做”allow-dirty”的参数。这可以防止我们在运行这个命令时需要提交更改。所以我们可以直接说”allow-dirty”。

我认为这是传递给Cargo而不是Clippy的参数,所以让我们把它移到双破折号之前。我们把双破折号放在它后面,现在我们应该有一个修复了。

你可以看到,这自动修复了我们的代码。我们可以用Ctrl+Z来撤销这个更改,然后如果我把这个稍微往下移一点,重新运行cargo clippy命令,你可以看到它自动为我修复了这个问题。

我不必手动修复它,因为也许我的代码中有100或200个不同的地方不必要地调用了to_string,这将处理并修复所有这些实例,这样我就不必手动寻找并解决它们。这是一个真正的时间节省器,你可以通过Clippy CLI工具获得。

如你所见,它非常强大。这里有许多不同的规则,分布在这些不同的组中。这种分组真的很好,因为如果你想查找与性能相关的问题,比如你可能遇到了一些性能问题,想要专门解决性能问题,你可以只过滤这些基于性能的规则,看看你能找到什么。

让我清除一下那个搜索,所以这里所有的规则都是与性能相关的。我们可以对所有这些不同的规则运行Clippy,看看它们是否适用。此外,如果这些规则中的任何一个有配置选项,比如这个,我们也可以在我们的clippy.toml文件中指定这些选项。

我强烈建议你亲自动手使用Clippy。也许对一个现有的Rust项目运行Clippy,如果你已经开始构建自己的Rust项目,看看它会抛出什么样的警告或错误。

也要确保将Clippy纳入你的CI/CD工作流程中。如果你使用GitHub Actions、GitLab CI/CD或者可能是OneDev CI/CD,进入你的配置文件,将这个Clippy命令作为你流程中的早期步骤添加进去,以便尽早在你的管道中捕获这些错误,这样你就不用等待很长时间构建完成,然后才意识到你有一些问题。

强烈建议你玩玩Clippy,玩玩这些不同的规则,玩玩这些不同的配置选项。你可以使用CLI或者基于属性的语法来应用规则更改。如果你决定走CLI路线,当然你会在这里指定这些CLI参数,在你的CI/CD配置文件中,无论你在CI/CD管道中运行的是什么脚本,你基本上就是在你调用Clippy的CLI脚本中添加所有这些deny和allow参数。

然后你当然可以始终检查退出代码101,看看是否有问题,然后你可以中止管道,或者你可以只发送一封电子邮件或Slack消息给你的开发团队,表明Clippy捕获到了一些问题,然后如果你觉得这种方法可以接受的话,可以继续构建。但是,这完全可以根据你正在编写的每个Rust项目进行定制。

无论如何,我想这就是我想要涵盖的关于Clippy工具的所有内容。我强烈建议,特别是初学者使用它,因为它确实能帮助你编写更好的代码,帮助你更多地了解Rust语言和标准库,它只是一个很棒的工具,可以帮助提高你的Rust构建的效率。

再次,如果你从这个视频中学到了什么,请给这个视频点赞,请在下面留言,让我知道你的想法,并订阅频道,点击那个铃铛图标,这样你就能在新视频发布时收到通知。我很想听听你对整个播放列表的看法。感谢观看,我们很快会再见。保重。

hey guys my name is Trevor Sullivan and

welcome back to my video channel thanks

so much for joining me for another video

in our rust programming tutorial series

now the topic that we’re going to be

focusing on in this particular video is

actually a tool that you can use in your

rust workflow in order to write more

robust and higher performance code plus

you can actually learn more about the

rust programming language by using this

tool now typically this tool called

clippy is included with an installation

of rust when you use the rust up

installer in order to install your rust

tool chain which includes things like

the rust dock CLI the cargo CLI and the

rust compiler which is rust C now clippy

is a really cool tool because it is able

to kind of inspect your code files and

make recommendations on what you can

improve in your code project so

ultimately when you use clippy to help

provide guidance to you when you’re

writing your rust code you get more code

consistency you get recommendations

about maybe new techniques in your rust

code that you didn’t even know were

possible and you can also ensure

consistency across a team environment

this is a really important thing when

you start to have multiple team members

that are all working on a similar

project you know one team member might

prefer a certain syntax or they might

use a certain method but a different

team member might use a different

mechanism in order to accomplish the

same task and so by using clippy it kind

of provides these guard rails to make

sure that your entire team is on board

and following the same best practices

for writing rust code now as I mentioned

clippy is part of the rust tool chain

however if you installed the minimal

version of the rust tool chain then this

component may not have been installed in

fact it probably wasn’t installed if you

installed the minimal version of the

tool chain but thankfully if you’re

using the rust up installer here you can

simply specify that you want to add the

clippy component into your rust

installation and that’ll get clippy

installed in your environment and then

you can go ahead and call clippy anytime

that you want to now you can use clippy

at the command line while you’re

building your rust code but you can also

incorporate clippy as part of your CI CD

pipelines so anytime that one of your

team members does a git commit and then

get push up to your code repositories as

part of that CI CD pipeline that gets

kicked off during that push event you

can execute the clippy utility you can

set up some configuration options as

well in a configuration file as well as

command line parameters and then if

clippy catches any issues inside of your

code you can actually abort execution of

your pipeline and have the developer go

back and fix whatever code issue there

is and then go ahead and update do

another git commit git push and fix

those types of issues so you can use

clippy as kind of a learning tool you

can use it as a gatekeeping tool in a

good way not a bad way and you can help

just ensure that your team is writing

consistent and high quality and high

performance code now there are over 650

lints I know the clippy documentation

book here says 600 lengths but if you

check out the open source repository

here for clippy you can see that in

their latest version of their readme

they do boast having over 650 linting

rules available and when we talk about

linting linting is basically just when a

software utility inspects your source

code files and looks for any potential

enhancements that you could make or any

potential errors that you could run into

and this helps you to catch errors as

early in your development life cycle as

possible you don’t really want to get

you know maybe 20 minutes into a build

process and then realize later down the

line that you have an issue with your

code it would be a lot better to get

that immediate feedback from clippy

early on in the development process so

that as soon as you run into some kind

of warning or error inside of your code

you can go back fix that immediately

push the updated code and get that

feedback as quickly as possible so I

highly recommend using clippy and you

might be thinking as a rust beginner

well why do I want to introduce more

guard rails why do I want to introduce

more issues when I’m already trying to

learn the rust programming language and

maybe hitting a bunch of brick walls

well again this is going to provide a

guidance based on these linting rules so

the rules are configured to not only

detect issues but also to provide

recommendations and to deepen your

understanding of what those specific

issues are so that the next time that

you write similar code like whatever you

caught previously with one of these

linting rules you can write code in a

better way so I actually think that

getting these errors and warnings from

these utilities is a positive thing in

your learning cycle of rust because you

are able to have a tool that’s providing

those guard rails for you so look at

this as something that’s going to kind

of come alongside you and help provide

better recommendations on how to write

rust code rather than looking at it as

an inhibitor or something that’s

blocking you because it’s actually

helping you ultimately to write better

rust code now these 650 lints those are

a lot of different rules and they are

categorized into these default

categories in clippy so we’ve got clippy

all we’ve got clippy correctness we’ve

got suspicious style complexity

performance related things and by

default there are certain warning or

allow levels that are applied to each of

these categories so for example if you

look at something around the cargo

manifest files right those by Def

defaults are going to be allowed but if

you want specific rules to be caught as

warnings or actually block execution and

error out when you run the clippy

utility then you can change the default

level here from allow to maybe warn or

deny we’ll talk a little bit more about

those levels in just a moment but

basically each of these categories are

broken down into these individual rules

that Target very precise recommendations

inside of your code so it’ll look for

certain patterns in your code it’ll look

for certain method calls it’ll look at

the maybe the number of arguments that

you’re passing into a function or maybe

how long your function code is and

things of that nature and some of these

things actually have customizable

parameters on them too there’s actually

a separate configuration file that we

can use in clippy to kind of tell it how

to apply these different rules so if we

take a look at this configuration

section right here there’s a special

file that you can add into your project

called clippy.tomel or you can hide it

with Dot clippy.tomel and it’s going to

look for that file in your project

directory or you can actually specify an

environment variable for where to locate

that configuration file or you can

specify this manifest directory where

your cargo manifest is located but by

default it’s just going to look in the

current directory wherever your shell is

pointing to so that’s kind of the

easiest way to use it rather than having

to set one of these environment

variables but inside of this

configuration file it’s basically just

an ini or Tamil kind of file format here

but it doesn’t really have any headings

so you don’t have those square brackets

with the headings like you do in the

cargo.tomo file all we do is we set a

variable and we set the variable to a

certain value and you can find all of

these input configuration options or

these variables and the supported values

for them in the documentation for the

actual lints themselves so if we take a

look at the read me over here and then

click on this link to go to the linking

rules here that’ll take us directly to

this clippy lints page here and this is

going to show you all of these

individual rules and if you’d like to

you can actually filter them by group

here so we could say I would just want

to see things related to my cargo.tommel

configuration or maybe I want to see

things that are performance related so

I’ll just click on none and then include

the performance group right here and as

you can see there are quite a few

performance related rules right here and

then once you find a rule like maybe

let’s take a look at uh two string for

example so if you are using the print

line macro here and you are taking a

string as input and you call the

tostring method on it in order to

convert it to a string this rule is

basically checking for that and saying

hey it’s not necessary for you to call

tostring because the thing that you’re

passing in to this print line macro

already implements the display trait and

so therefore the print line macro is

able to decipher how to convert that

object input as a string representation

so basically it’s just going to

recommend that you eliminate this two

string method call and that will provide

higher performance code now another nice

thing about cargo is that it can

actually fix some of these issues

automatically unfortunately I didn’t see

any kind of specificity in here to

determine if a rule is automatically

fixable or not so you’ll kind of have to

play around with the individual rules to

figure out if they are supported for

automated fixing but there’s a parameter

called dash dash fix that you can pass

in to the cargo clippy command and that

will automatically trigger a fix for any

of these issues for you if they support

an automated fix also we’ve got these

linting levels so I mentioned these

briefly earlier and said we’d take a

deeper look at them there’s allow warn

deny and none but there’s actually a

couple of other levels as well so if we

go back and take a look at the clippy

documentation and take a look at I think

it’s under the usage section here maybe

let’s see if it’s in here let me just do

a quick search and search for levels

here

so in any case the levels allow us to

specify things like force Warn and force

deny it which is also known as forbid as

well I’m not entirely sure where that is

in the documentation here but there’s a

force worn and a forbid option that you

can set as well and those are not

displayed inside of this interface here

but you can use those to basically

prevent somebody from overriding those

particular rules now how do you actually

enable these rules well there’s really

two different ways that you can do this

number one is you can actually go

directly into your source code and you

can use an attribute in order to

configure these lints and the other

thing that you can do is actually

specify the linting rules on the clippy

command line so when you run cargo

clippy to invoke the clippy utility you

can actually specify which rules you

want it to either warn or deny the

access to in your code so this example

right here is going to show you how to

basically deny all rules this is an

attribute syntax that you would use

across an entire module or across an

entire crate if you are specifying that

in your lib.rs file so you can use this

attribute-based syntax but you can also

use this syntax down here where we can

allow with Dash capital A there’s also a

dash dash allow that’s kind of spelled

out I generally prefer to use the

spelled out parameters just because

they’re more clear if you’re reading

your code because Dash a is pretty

ambiguous that could mean a lot of

different things it could mean all it

could mean allow it could mean a whole

bunch of different things so I generally

like to spell these out also dash dash

warn or Dash capital W is how you can

specify a warning level and if you want

to deny something you can actually use

Dash capital D or dash dash deny and

then specify the name of the rule and

you’ll notice that when you specify the

name of a rule it does need to be

prefixed with clippy and then a double

colon here and then you can specify the

name of the rule so if you’re looking at

the list of lengths in this

documentation right here just remember

that you need to use the lint name

prefixed by clippy double colon if

you’re using it inside of the command

line when you run cargo clippy so in any

case we’re going to look at a couple of

these rules and how we can incorporate

them into our code and we’ll take a look

at the parameters on the cargo clippy

CLI tool so that you can understand how

it works in practice as well as the

automated fixes that we can apply to our

code in order to fix changes or fix

issues in our code without having to go

and manually seek out those issues and

fix them on a line by line basis which

can ultimately save you a lot of time

when you’re working on a large project

but before we jump into our example I

just wanted to invite you to subscribe

to my YouTube channel here at

youtube.com Trevor Sullivan I’m an

independent content creator so any kind

of support that you can provide to this

channel is extremely helpful in the

pinned comment below I’ll leave some

links that you can use as affiliate

links and you can optionally use those

to do some online shopping and if you

make a purchase through there I’ll get a

small portion of the proceeds of that

particular sale so please consider doing

that also you can go out to my channel

here and find the rust programming

tutorial playlist we’re up to 28 videos

so this will be video number 29 and

there’s a whole bunch of rust Concepts

inside of this playlist here that we go

really into depth and Hands-On with so

feel free to check out this entire

playlist here and you know pick up any

knowledge that maybe you have a gap on

if you need threading knowledge if you

need to learn about iterators and how to

kind of work with the different methods

on there if you want to learn about hash

maps and hash sets there’s a whole bunch

of different Topics in here so feel free

to go through this playlist also if you

learned something from this video please

leave a like on the video that helps to

kind of promote to this video in the

algorithm and also leave a comment down

below and just let me know what you

thought of this video the style and

format and also let me know what other

kind of rust topics you want to see on

my channel and in this playlist right

here so with that out of the way let’s

go ahead and spin up a brand new project

and we’ll take a look at how to use

clippy so I’m going to switch over to my

integrated development environment which

is Microsoft Visual Studio code I’m

running it on Windows 11 that’s my

development workstation locally but as

you probably have seen in my earlier

videos especially the first video I talk

about how to set up a remote development

environment against a remote Linux

server so I actually have a virtual

machine running with lexd which I have

another playlist series on setting up

Lex D on a bare metal Linux server and

this virtual machine is just running a

headless Ubuntu Server distribution

using the latest LTS distribution of

Ubuntu and I have vs code remotely sshed

into that virtual machine so when I run

a shell here and do use things like rust

C then this is actually going to be

running on a Linux distribution rather

than on my local Windows system and this

is really nice because it allows me to

kind of switch back and forth between

different systems and I have a

consistent development environment so if

I go use my laptop upstairs you know I

can just load up my SSH session that I

was working on on my development

workstation in my office and I can just

pick up where I left off so I’d strongly

recommend that you invest some time into

learning how to set up that remote

development environment because in the

long run it’ll make you a more

productive developer but as you can see

I have rust installed here I’ve got the

latest version of rust 1.72.1 and rust

up is how you typically install rust

especially on a Linux server and if we

take a look at our components here we

want to basically verify that clippy’s

installed so what we can do is say rust

up and then go into this component

context and then we can say list

installed and available components so

just do a rust up component list and

then if you do a shift Ctrl F I think or

maybe just control F in here and then

search for clippy you can see that the

cargo components installed the clippy

component is installed rust docks are

installed rust source is installed and

there are a bunch of components that I

don’t need on this particular system

that aren’t installed but any of these

bolded items here that have installed at

the end are going to be present on your

system if you want to inspect the file

system to verify that clippy is

installed then you can actually just go

to your home directory

so we’ll say dollar home and we’ll go

into the dot rust up folder here then we

can go into the tool chains folder right

here and you can see I’ve got the stable

tool chain I don’t have nightly

installed on this system and then if we

go into that specific tool chain version

we can take a look at bin and inside of

the bin directory you should see that

there’s a cargo Dash clippy binary that

you can execute so if we execute the

cargo CLI and then run clippy afterwards

then that’s going to be how we invoke

this utility so if we do cargo clip e

dash dash help you can see we’ve got

this dash dash fix parameter right here

that allows us to automate fixes in our

source code it also shows us how to use

this attribute here to allow or deny or

warn on certain linting rules in our

code and then these options right down

here are going to allow us to at the

command line when we actually run cargo

clippy we can specify warn allow deny

and forbid as additional customizations

for which rules we want to enable or

disable right and then don’t forget

about the configuration file we’ll take

a look at some specific configuration

options as we go through the individual

rules but if we do something like let’s

search for args down here and go to all

lint levels and we want to look for one

called the number of arguments

so if we take a look at I think it’s

number of arguments or argument count or

something like that too many arguments

so let’s do that two many arguments so

in this particular rule here you’re

basically just inspecting the number of

arguments in a function and it has this

configuration section so when you’re

looking at a lint rule for clippy you

want to look at this configuration

section and see if it specifies any

additional configuration options and

then these configuration options are

what you can place into that Tamil

configuration file specifically for

clippy and that will customize how this

specific rule executes against your code

so let’s go ahead and write some simple

code in a project so we’ll start by

creating a project directory in here

we’ll just do a maker and then specify a

folder like lint Dash test

and then we’ll go ahead and open that up

so I’ll do Ctrl K Ctrl o and we’ll open

up the lint test folder in our editor

which will reload the window and get us

connected to that specific project so

now we have this empty folder and we’ll

just do a cargo in it in this directory

and now we have our simple scaffolded

out binary project here you could also

do a cargo init and specify dash dash

lib if you want to create a library

instead with lib.rs but for now I’m just

going to go ahead and create a binary

application with a main entry point so

now what we can do is start by

specifying some rules in our code using

attributes so let’s actually use this

example right here of too many arguments

and let’s say that we want to prevent or

deny a build of our code if we have too

many arguments so we want to use the

deny attribute in our code so we do a

hashtag and then an exclamation point

and then we can say deny

and then inside of this deny attribute

we can specify which linting rules we

want to block the build on so in this

particular case we can just hit control

space and see a list of all of these

different rules right here and so we

want to use the two many arguments rule

so we’ll pass in clippy double colon and

then two many arguments and that way if

we have more than by default seven

arguments you can see right down here

the documentation says it defaults to

seven so if we have eight arguments in

our function definitions then that will

breach this Rule and cause an issue so

for starters before we actually apply

the customization Let’s do let’s declare

a function

called test lint and inside of the

function declaration here

let me just call it so that it’s not

complaining about us not calling it here

and inside of the function definition

we’re going to specify eight arguments

so we’ll say ARG one is a u8 and then

I’ll just duplicate that two three four

five six seven eight times and we’ll

just rename these to arg2

ARG three ARG four

ARG five

six seven and eight all right so now we

have eight input arguments here and of

course it expects eight inputs I’ll do

one two three four five six seven eight

and so now

I should ultimately be blocking the

build based on these arguments here now

it looks like I have a little syntax

error here so let’s see what’s going on

right curly brace I have a left one over

there I have a right one over there not

really sure what’s going on here let me

just do a cargo build and it looks like

we have an unclosed delimiter somewhere

so where is that oh I killed off the

right curly brace for main so let’s go

ahead and include that and so now we

should be getting an error or a warning

it’s indicating that we have too many

arguments here

now this is just complaining about

unused variables here but let’s see if

we can force it to fail so if I do a

cargo and then clippy command that’s

going to invoke the linter and as you

can see when I invoke clippy with cargo

clippy it’s going to look at these

attribute based rules that we’ve

declared in our source file here and

it’s going to parse those it’s going to

look for any configuration options for

those linting rules and then evaluate my

code using static analysis in order to

see if there’s any issues and as you can

see it says error this function has too

many arguments it says the rule

indicates that we want seven again

that’s the default I haven’t customized

that in my clippy configuration but we

actually have eight arguments

so now let’s say that I want to

customize the number of arguments that

are going to cause a breach of this rule

also one more thing really quick if we

do Echo dollar question mark here in

bash you can see that we get an exit

code of one zero one so if you’re in a

CI CD Pipeline and you’re doing

Automation in there you can just inspect

that exit code and see if it’s 101 or

non-zero and that will indicate that

there was some kind of error that

occurred in the clippy linting and then

you can just immediately abort the build

process abort the CI CD Pipeline and

that’ll save you a whole bunch of CI CD

minutes if you’re using a SAS vendor to

do CI CD and you’re paying by the minute

like with GitHub actions or maybe Circle

Ci or Travis CI something like that and

so that’s a really nice way to just kind

of detect if there were any issues also

we can just change this to warn and say

instead of denying I want to just warn

on that and then if we warn we’re not

going to get a non-zero exit code we’ll

just get a zero exit code but in the

standard output we’re still going to see

that that there was some recommendation

here

so now what we’re going to do is to

customize the number of arguments so the

default for this too many arguments lint

rule was seven arguments so we put eight

arguments to breach the rule but let’s

say that we wanted to customize that and

say I want a maximum of about four

arguments

so again we’re going to grab this

configuration option right here that

allows us to customize this specific

rule for too many arguments and we’ll

create a new configuration file in our

project here we’ll call it dot clippy

dot Tamil and you don’t have to put the

period at the beginning it’ll look for

clippy.tomel as well and then all we

need to do is paste in that setting and

set it to the value that we want so our

functions can have a maximum of four

parameters and if we breach that then

we’re going to warn on that issue or

I’ll actually change it back to deny

so now we’ll do cargo clippy and as you

can see we have eight but it wants only

four now so that’s how we apply that

customization option to this lint rule

so now if I eliminate these extra

arguments here and I’ll just put them in

a comment in case I need to put them

back

now we can eliminate these from the

inputs here

and now this should satisfy the rule so

if we do cargo clippy again you can see

that we’re getting warnings about unused

variables but we’re no longer getting

the warning or the denial rather the

error saying that we have too many

arguments of course if we went back and

added ARG number five again and re-ran

cargo clippy then we are going to get

that error along with the 101 exit code

so that’s how we can apply the attribute

for denying this particular rule to the

entire crate because the hashtag

exclamation point applies to the parent

so main.rs is parent is the crate itself

so this should apply to our entire crate

and we can also apply things to

individual entities as well so if we

want to do that let’s say maybe we want

to allow unused variables right

so let’s do something like unused and

we’ll search for

unused argument I think

let’s do a search for argument or unused

variable Maybe

and I’m not seeing that show up here but

there is one for allowing unused

variables

I’m not sure maybe that one’s not

actually a clippy rule but let’s check

out some other types of warnings that we

might have right here and see if we can

find another one

so uh I think another one is actually

the two string so let’s go ahead and

search for tostring right here and I

think that that was going to be a

warning a performance related category

warning so let’s go ahead and try to use

this inside of an example so if we have

a type that implements the display trait

and we call tostring on it it should

catch that as a warning and as you can

see there’s no configuration section

here at the bottom so there’s no

additional configuration options for

this particular setting so what we’re

going to do is just Define

what is this two strings so we’ll Define

another test function here we’ll say

test string and let’s also fix this

we’ll go ahead and remove that fifth

argument there so that that’s Happy

so what we can say down here is that we

want to allow unused variables so let’s

say let x equal five we could also use

arguments as inputs like ARG one is a

unsigned 16-bit integer

and so as you can see we get unused

variable unused argument and unused

variable again right down here so if we

want to allow unused variables only on

this particular function here we can

just use a hashtag without the

exclamation point and that’s going to

apply to the item directly following the

attribute definition so we could say

allow

and then specify unused variables down

here and I don’t think this is a clippy

rule because it doesn’t get prefixed

with clippy here and I also couldn’t

find it in the clippy docs here so just

be aware that that one I think is just

built into rust here so now you can see

that those warnings immediately go away

if we run cargo clippy here then you can

see we just get function test string is

never used but we don’t get those unused

variable warnings like we do for this

function called test lint up here

so if we comment out that attribute and

rerun cargo clippy we should get an

unused variable here on the test string

function now and previously it was only

reporting on unused arguments or

variables on the test lint function so

it’s complaining that we’re not using

the argument ARG one on the test string

function and it’s complaining that we’re

not using the X variable that we

declared inside of the test string

function body but the rule that we

wanted to test out was the tostring rule

right down here

so what we’ll do is go ahead and just do

a print line

operation here let’s do print line we’ll

specify curly braces and then we’ll pass

in I think X I’m not sure if it

explicitly display implements display

but I’m guessing it probably does we

could also just use a string value like

hello from rust Trevor and then we’ll

pass in X yeah that’s fine it’s a string

and then we can do dot two string here

so now we’re taking a string slice and

we’re actually converting it into a heap

allocated string by calling the two

string method on it so now if we do

cargo clippy then you should see this

warning right here that says two string

was applied to a type that already

implements the display trait so this is

uh again a performance related thing

that’s saying hey you don’t actually

need this tostring method call here so

go ahead and eliminate it and your code

will still work perfectly fine right so

once we rerun this here we no longer get

that warning and that’s helping to

improve the performance of our

application by providing that linting

guard rail by default again the default

level for that particular rule is to

warn so it’s not going to allow it by

default but there are some things that

are allowed by default that you can

enable warnings or deny operations for

such as these right here

if you just filter by the allow rules

right here then these are types of

things that are be allowed by default

but you can enable them in your

configuration you can actually set a

warning attribute or a deny attribute so

in order to specify these rules at the

command line that’s kind of the second

way so far we’ve taken a look at the

attribute based syntax here that applies

at the crate level the parent level and

the individual function level or

individual struct level enums and traits

and that kind of thing but if we want to

include some of these rules in our CLI

here

we can do cargo clippy take a look at

the help and then we can warn allow deny

or forbid certain things inside of our

code and the 4-bit is going to be the

most strict because that’s not that’s

going to basically ignore any overrides

that you have so that’s uh that’s kind

of the most strict option if you want to

forbid things in your code but let’s

just say that for now

we want to maybe allow

let’s see let’s allow the tostring

function right here

so we’ll do x.2 string again but this

time we’re going to go ahead and specify

that we want to allow it in our clippy

CLI rather than

specifying it as an attribute based

syntax here

so what we’ll do is say cargo clippy and

then put dash dash here because that

will prevent any arguments from being

passed to Cargo anything after the dash

dash will be interpreted by clippy

itself not cargo and so what we want to

do is say we want to maybe allow

the clippy and then what’s it called

let’s do a filter on two string warnings

here so it’s called two string in format

args so we want to basically do clippy

double colon and then two string in

format args

so let’s see can I copy that from

somewhere here I’ll just copy it here

we’ll click that button and then we’ll

do clippy double colon and paste that in

oh of course it when I copied it

included the prefix there so now if we

run this

you can see that we don’t get that

particular warning if we run without

that parameter we do get this warning

saying that two string was applied to

the type that already implements the

display trait but if we run with the

dash dash allow here that will eliminate

that warning from popping up also if we

wanted to change that warning into a

deny we could simply specify dash dash

deny instead of allow and so now this is

going to cause an error during the

clippy invocation and that’ll catch that

rule early and now we get a 101 exit

code so again we can abort our pipeline

so those are really the two ways to

apply things we can do it at the

attribute level for kind of a global

scope here across the entire crate we

can do it I think that works at the

module scope as well so if you have a

sub module you could plug this attribute

into there I think that would probably

work and then we can also use this

syntax here in order to apply to the

element directly following and when I

say element of course I’m referring to

functions traits enums structs and

things of that nature

all right so let’s take a look at the

quick fixes or the automated fixes with

clippy so one of the things you might

have noticed from earlier is that when

we do cargo clippy dash dash help the

cargo CLI actually has a dash dash fix

option so you want to pass this into

cargo not into clippy so you want to put

it before the Double Dash and that’s

going to be an option that gets past a

cargo anything after the Double Dash

gets passed to clippy specifically so if

we do cargo clippy dash dash fix and

then plug in our clippy options after

that then we can apply automated fixes

so let’s see if there’s an automated fix

that’ll remove this invocation of the

tostring method

so right now the way things currently

stand is that if we run with a deny here

we’re going to get this error on

tostring here

because we changed it from a default

warning into an explicit deny on our

command line but now we’re going to go

right before these double dashes right

here we’re going to apply dash dash fix

to that and as you can see by default

it’s going to warn us about uncommitted

changes but very similar to doing a

publish operation which I don’t think

we’ve covered yet publishing crates to

the crates.io registry we can specify

this parameter here called allow dirty

and this will prevent us from needing to

commit our changes with running this

command so we can go ahead and just say

allow

dirty

I think that’s something we passed a

clippy not cargo so let no it is

actually cargo so let’s move that before

the Double Dash is here

we’ll put the Double Dash after that and

now we should hopefully have a fix here

so as you can see that automatically

fixed our code we can do control Z to

undo that change and then if I just

bring this down a little bit here and

rerun the cargo clippy command you can

see that it automatically fixed that

issue for me so I don’t have to fix that

because maybe I’ve got 100 or 200

different spots in my code where I was

calling tostring unnecessarily that’ll

take care of fixing all of those

instances for me so that I don’t have to

go manually seek them out and resolve

them so that’s a real Time Saver that

you get with the clippy CLI tool so as

you can see it is pretty powerful here

there’s lots of different rules that are

spread across these different groups

right here so this is really nice that

it’s grouped because if you want to look

for performance related issues if maybe

you’re having some performance issues

and you want to try to resolve

specifically performance issues you can

just filter by these performance-based

rules here and see what you can find let

me just clear that search and so all of

these rules right here are performance

related and we can just run clippy

against all these different rules and

see if they apply and also if any of

these rules have configuration options

like this one here we can also go ahead

and specify those inside of our

clippy.tomel file so feel free to play

around with clippy in fact I strongly

encourage you to get some Hands-On time

with clippy maybe run clippy against an

existing rust project if you’ve already

started building your own rust project

and just see what kind of warnings or

errors it throws also make sure that you

incorporate clippy into your CI CD

workflows as well if you use using

something like GitHub actions or gitlab

CI CD or maybe onedev CI CD go into your

configuration files and add this clippy

command as an early step in your process

to catch these errors as early as

possible in your pipelines so that

you’re not waiting a long time for the

build to complete and only then realize

that you have some issues so strongly

encourage you to play around with clippy

play around some of these different

rules play around with some of these

different configuration options you can

use the CLI or you can use the

attribute-based syntax in order to apply

the rule changes so if you decide to go

the CLI route of course you would be

specifying these CLI parameters

in here in your CI CD configuration file

whatever script it is that you’re

running in your CI CD pipeline you’d

basically just add in all these deny and

allow parameters inside of your CLI

script that you’re calling clippy with

and then of course you can always check

for that exit code of 101 to see if

there is an issue or not and then you

could either abort the pipeline or you

could just maybe send an email or a

slack message to your development team

indicating that clippy caught some

issues and then maybe proceed with the

build if you’re comfortable with that

approach but again it’s entirely

customizable to each and every project

that you are writing in Rust so in any

case I think that’s everything that I

wanted to cover around the clippy

utility I strongly encourage especially

beginners to use it because it really

does help guide you on writing better

code it helps you learn more about the

rust language and the standard library

and it’s just a great tool to have in

your back pocket to help improve the

efficiency of your rust builds so again

if you learned something from the this

video please leave a like on this video

please leave a comment down below let me

know what you thought of it and

subscribe to the channel click on that

Bell icon so that you get notifications

when new videos are published and I’d

love to hear your thoughts in General on

this entire playlist thanks for watching

and we’ll see you soon take care

[Music]

so I was curious about which lens rust

programmers actually use in their

programs if you’re not aware you can

annotate your code with uh extra lint

from either the rust compiler or clippy

to be make your code extra

strict so I thought a good way to do

that would be to download every crate

and go and look so I did that that

conveniently there’s a crate called get

all crates made by the famous David tln

uh and it’s perfect for doing research

on every crate and

crates.io once we’ve downloaded

everything the next step is to extract

and search through all the

crates eventually we get a tsv file and

we can use command line tools to extract

the top lens let’s dig into this list

because there are some really

fascinating things in here in the top

spot is deny missing docks now this is a

really nice lint to see at the top deny

missing docs will prevent the compiler

from compiling code where there are any

missing public items without

documentation next on the list is forbid

unsafe code forbid is an extra level

above deny which makes it impossible for

crate authors to opt in to unsafe or

whichever lint uh on a caseby casee

basis so they can’t annotate any

specific item with

allow it’s not a perfect guarantee but

it’s as close as we can

get deny warnings takes the third place

and this is an interesting one because

it’s one I personally don’t

recommend we are promoting all warnings

to erors here and this is on the surface

a very very good thing it means that our

code is very strict however it also

prevents the ability for you to

automatically upgrade your code to a new

version of rust if a new lint is uh

sorry a new warning is introduced to the

language in a in the next version uh

suddenly your CI system will break

without manual intervention so I don’t

think think that is something that

should be added automatically to every

crate let’s not go through the rest of

the list but I would actually like to

point out one more which is missing

debug implementations I think this is

quite useful because it biases you to

think about how your users are accepting

your API we want to make things as easy

for people consuming our crate as

possible and that would mean

implementing traits like hash equality

or partial equality OD uh and debug uh

as frequently as

possible before ending the video I would

just like to show you how to access more

information about each of these lints so

you can make your own choices there are

web pages for both the rust SE lints and

the clippy lint you also have the option

of the command line

uh that’s rust

C- capital W help uh will give you the

very very long list of rust seed lens

for

example I hope this has been a fun and

informative look at clippy lint and rust

SE lint I wish you all the best for your

rust programming Journey my name is Tim

clicks and I am on the planet to build a

better Planet you’re welcome to like

subscribe and and suggest future videos

I’m always interested in your ideas take

care goodbye

Advice for #rustlang devs: Lints to add to your crates

好的,我会按照您的要求整理内容并翻译为中文。以下是整理和翻译后的内容:

我很好奇Rust程序员在他们的程序中实际使用哪些lint。如果你不知道的话,你可以用来自Rust编译器或Clippy的额外lint来注释你的代码,使你的代码更加严格。我认为一个好方法是下载每个crate并查看,所以我这样做了。方便的是,有一个名为get-all-crates的crate,由著名的David tln制作,它非常适合对crates.io上的每个crate进行研究。

一旦我们下载了所有内容,下一步是提取并搜索所有的crates。最终我们得到一个tsv文件,我们可以使用命令行工具来提取最常用的lint。让我们深入研究这个列表,因为其中有一些非常有趣的东西。

在榜首的是deny_missing_docs。看到这个lint排在首位真是太好了。deny_missing_docs会阻止编译器编译任何缺少文档的公共项目的代码。

列表中的下一个是forbid_unsafe_code。forbid是比deny更高一级的限制,它使得crate作者不可能在个别情况下选择使用unsafe或其他任何lint。他们不能用allow注释任何特定项目。这不是一个完美的保证,但这是我们能得到的最接近的保证。

deny_warnings排在第三位,这是一个有趣的lint,因为这是我个人不推荐的一个。我们在这里将所有警告提升为错误,这表面上看是一件非常好的事情。它意味着我们的代码非常严格。然而,它也阻止了你自动将代码升级到新版本Rust的能力。如果在下一个版本中引入了一个新的警告,你的CI系统会在没有人工干预的情况下突然中断。所以我认为这不应该自动添加到每个crate中。