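The pod is the smallest scheduling unit in K8s, and each pod has its own network interface and IP address.

A pod can contain one or more containers, which is very useful when several processes need to be deployed together.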

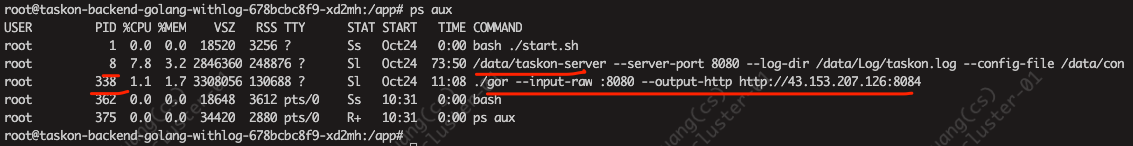

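For example, suppose there is an application process plus a GoReplay process, gor, that captures the application's network traffic for later replay.

(At the moment, gor is started by hand after entering the container.)

Of course, a pod can also hold a single container that runs several processes, but that is not best practice.

Best practice is for a pod to have multiple containers, each running a single process.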
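As a minimal sketch of that layout, the manifest below defines one pod with two containers, each running a single process. The pod name, container names, port, and commands are hypothetical and chosen only for illustration; it assumes the busybox image ships the `httpd` and `wget` applets, which the stock image normally does.

```yaml
# Hypothetical example: one pod, two containers, one process per container.
apiVersion: v1
kind: Pod
metadata:
  name: two-container-demo          # hypothetical name
spec:
  containers:
    - name: app                     # stand-in for the real application
      image: busybox:1.31.1
      # Serve a single static page on port 8080 in the foreground.
      command: ["sh", "-c", "mkdir -p /www && echo hello > /www/index.html && httpd -f -p 8080 -h /www"]
    - name: helper                  # sidecar-style helper
      image: busybox:1.31.1
      # Containers in a pod share one network namespace,
      # so the helper can reach the app through localhost.
      command: ["sh", "-c", "while true; do wget -q -O - http://localhost:8080/; sleep 10; done"]
```

Because both containers share the pod's network interface, a traffic-capturing helper such as gor can observe the application's port without any change to the application image.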

This is similar to the sidecar pattern, integrated with taskon-server.

It requires an addition to dep.yaml.

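What is a sidecar in K8s, and what is it for?

In Kubernetes, the sidecar is a common design pattern whose core purpose is to extend and enhance the main application.

A sidecar's specific characteristics are as follows:

Typical use cases for a sidecar include:

Log collection: a sidecar container collects the main application's logs and ships them to a remote service.

Monitoring: a sidecar container watches the state of the main application to provide application monitoring.

Proxying: a sidecar acts as a reverse proxy, providing service discovery, load balancing, and similar functions.

Adaptation: a sidecar container handles data format conversion, protocol adaptation, and similar work.

In summary, a sidecar extends the main container with additional capabilities, and it is a widely used pattern in Kubernetes for concerns such as availability and fault handling. It takes advantage of the pod's multi-container design and keeps that extra complexity out of the application itself.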
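The log-collection use case can be sketched as follows. This is an illustrative manifest rather than anything from the taskon deployment: the pod name, container names, volume name, and log path are all hypothetical, and the "collector" simply tails the file instead of shipping it to a remote service.

```yaml
# Hypothetical example: a sidecar reading the main container's log file
# through a volume that both containers mount.
apiVersion: v1
kind: Pod
metadata:
  name: log-sidecar-demo            # hypothetical name
spec:
  volumes:
    - name: app-logs                # scratch volume shared inside the pod
      emptyDir: {}
  containers:
    - name: app                     # stand-in for the main application
      image: busybox:1.31.1
      command: ["sh", "-c", "while true; do date >> /var/log/app/app.log; sleep 5; done"]
      volumeMounts:
        - name: app-logs
          mountPath: /var/log/app
    - name: log-reader              # the sidecar
      image: busybox:1.31.1
      # Create the file if the app has not written yet, then follow it.
      command: ["sh", "-c", "touch /var/log/app/app.log && tail -f /var/log/app/app.log"]
      volumeMounts:
        - name: app-logs
          mountPath: /var/log/app
```

The main container needs no knowledge of the sidecar; the only coupling is the shared volume and the agreed log path.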

Here is my service's current Deployment. I now want to deploy one more container in sidecar style, named shuang_test, with the image busybox:1.31.1. How should it be rewritten?

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  annotations:
    deployment.kubernetes.io/revision: "1"
  generation: 1
  labels:
    app: taskon-demo-backend
  name: taskon-demo-backend
  namespace: benchtaskon
spec:
  progressDeadlineSeconds: 600
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      app: taskon-demo-backend
  strategy:
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 25%
    type: RollingUpdate
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: taskon-demo-backend
    spec:
      imagePullSecrets:
        - name: regcred
      containers:
        - image: reg.ont.io/taskon/backend:v1.69.2.sleep
          imagePullPolicy: IfNotPresent
          name: taskon-demo-backend
          resources: {}
          terminationMessagePath: /dev/termination-log
          terminationMessagePolicy: File
          volumeMounts:
            - name: uploaddata
              mountPath: /data
            - name: configjson-volume
              mountPath: /config
      dnsPolicy: ClusterFirst
      restartPolicy: Always
      schedulerName: default-scheduler
      securityContext: {}
      terminationGracePeriodSeconds: 30
      volumes:
        - name: configjson-volume
          configMap:
            name: taskon-demo-configjson
        - name: uploaddata
          persistentVolumeClaim:
            claimName: taskondemobackend
```

The updated YAML:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  annotations:
    deployment.kubernetes.io/revision: "1"
  generation: 1
  labels:
    app: taskon-demo-backend
  name: taskon-demo-backend
  namespace: benchtaskon
spec:
  progressDeadlineSeconds: 600
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      app: taskon-demo-backend
  strategy:
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 25%
    type: RollingUpdate
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: taskon-demo-backend
    spec:
      imagePullSecrets:
        - name: regcred
      containers:
        - image: reg.ont.io/taskon/backend:v1.69.2.sleep
          imagePullPolicy: IfNotPresent
          name: taskon-demo-backend
        # Note: container names must be valid DNS-1123 labels, so the
        # API server would reject the underscore in shuang_test.
        - name: shuang_test
          image: busybox:1.31.1
          resources: {}
          terminationMessagePath: /dev/termination-log
          terminationMessagePolicy: File
          volumeMounts:
            - name: uploaddata
              mountPath: /data
            - name: configjson-volume
              mountPath: /config
      dnsPolicy: ClusterFirst
      restartPolicy: Always
      schedulerName: default-scheduler
      securityContext: {}
      terminationGracePeriodSeconds: 30
      volumes:
        - name: configjson-volume
          configMap:
            name: taskon-demo-configjson
        - name: uploaddata
          persistentVolumeClaim:
            claimName: taskondemobackend
```

Here is my service's current Deployment. I now want to deploy one more container in sidecar style, named goreplay, with the image ubuntu-with-gor/20231017multistage and the start command echo 123. How should it be rewritten?

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  annotations:
    deployment.kubernetes.io/revision: "1"
  generation: 1
  labels:
    app: taskon-demo-backend
  name: taskon-demo-backend
  namespace: benchtaskon
spec:
  progressDeadlineSeconds: 600
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      app: taskon-demo-backend
  strategy:
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 25%
    type: RollingUpdate
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: taskon-demo-backend
    spec:
      imagePullSecrets:
        - name: regcred
      containers:
        - image: reg.ont.io/taskon/backend:v1.69.2.sleep
          imagePullPolicy: IfNotPresent
          name: taskon-demo-backend
        - name: goreplay
          image: ubuntu-with-gor/20231017multistage
          command: ["sh", "-c", "echo 123"]
          # The fields below ended up under goreplay rather than under
          # taskon-demo-backend; this misplacement is corrected later.
          resources: {}
          terminationMessagePath: /dev/termination-log
          terminationMessagePolicy: File
          volumeMounts:
            - name: uploaddata
              mountPath: /data
            - name: configjson-volume
              mountPath: /config
      dnsPolicy: ClusterFirst
      restartPolicy: Always
      schedulerName: default-scheduler
      securityContext: {}
      terminationGracePeriodSeconds: 30
      volumes:
        - name: configjson-volume
          configMap:
            name: taskon-demo-configjson
        - name: uploaddata
          persistentVolumeClaim:
            claimName: taskondemobackend
```

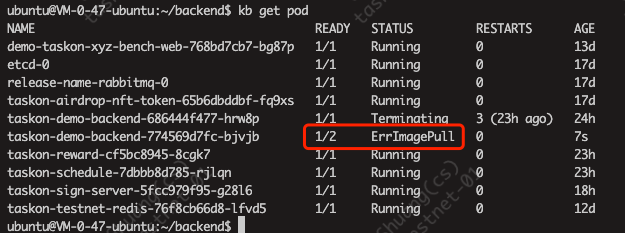

There are now two pods, and one of them has not come up.

kb describe pod taskon-demo-backend-774569d7fc-bjvjb

```
Events:
  Type     Reason     Age                From               Message
  ----     ------     ----               ----               -------
  Normal   Scheduled  79s                default-scheduler  Successfully assigned benchtaskon/taskon-demo-backend-774569d7fc-bjvjb to 172.22.0.47
  Normal   Pulled     77s                kubelet            Container image "reg.ont.io/taskon/backend:v1.69.2.sleep" already present on machine
  Normal   Created    77s                kubelet            Created container taskon-demo-backend
  Normal   Started    77s                kubelet            Started container taskon-demo-backend
  Normal   Pulling    33s (x3 over 77s)  kubelet            Pulling image "ubuntu-with-gor/20231017multistage"
  Warning  Failed     29s (x3 over 74s)  kubelet            Failed to pull image "ubuntu-with-gor/20231017multistage": rpc error: code = Unknown desc = Error response from daemon: pull access denied for ubuntu-with-gor/20231017multistage, repository does not exist or may require 'docker login': denied: requested access to the resource is denied
  Warning  Failed     29s (x3 over 74s)  kubelet            Error: ErrImagePull
  Normal   BackOff    1s (x4 over 73s)   kubelet            Back-off pulling image "ubuntu-with-gor/20231017multistage"
  Warning  Failed     1s (x4 over 73s)   kubelet            Error: ImagePullBackOff
```

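It looks like this image name does not work: a name without a registry host is looked up on Docker Hub, where no such repository exists. Rename the image to reg.ont.io/taskon/gor:v1.0.0 so that it points at the private registry.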

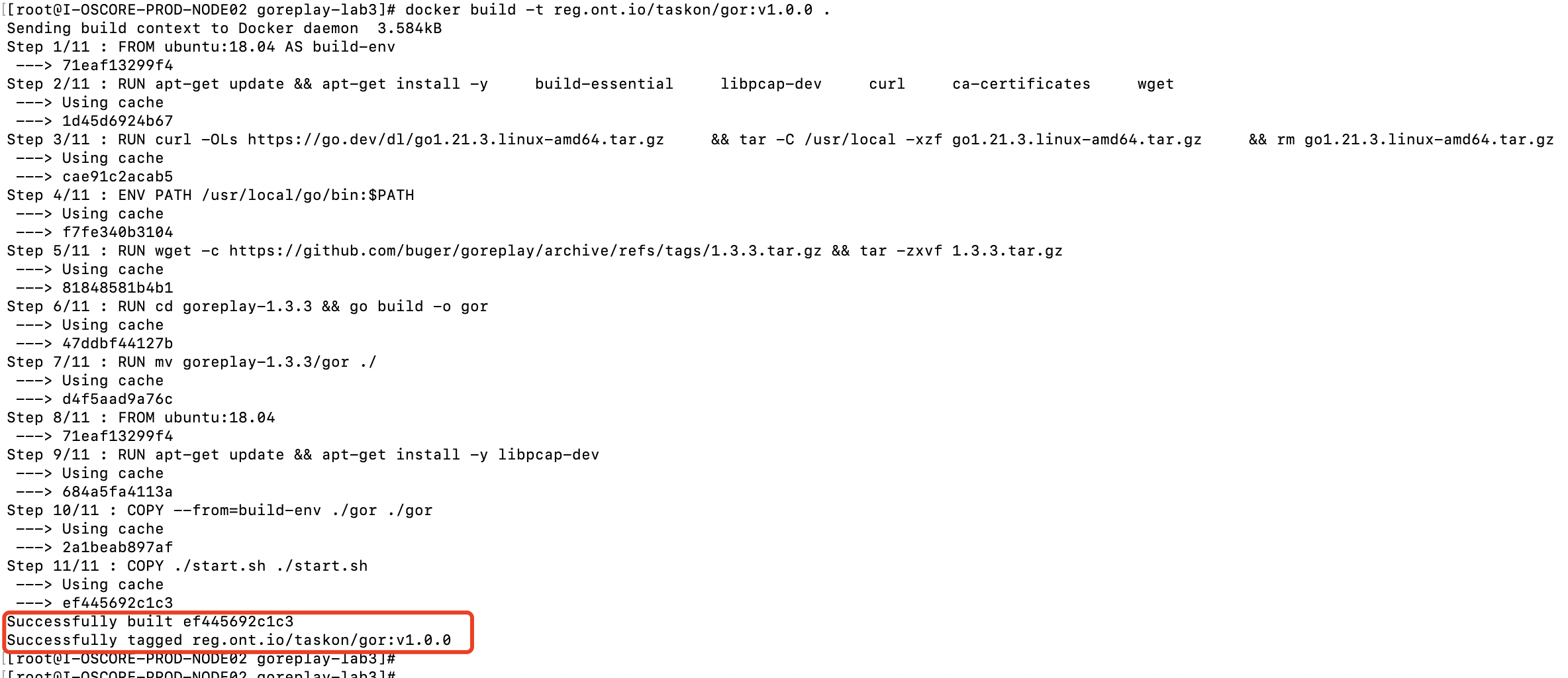

On the build machine, run docker build -t reg.ont.io/taskon/gor:v1.0.0 .

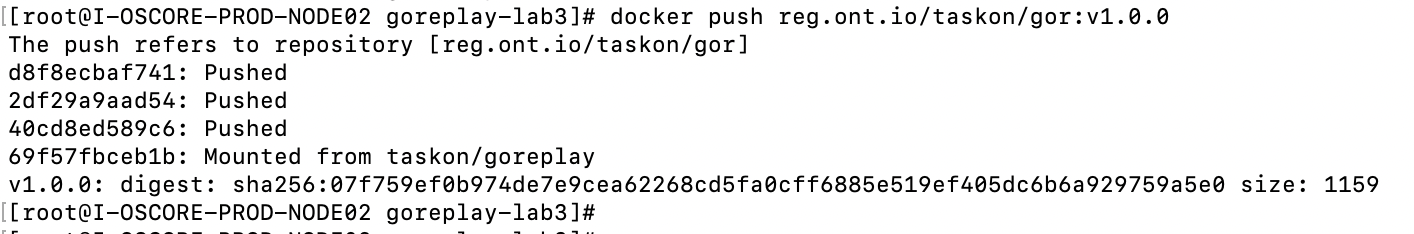

The real problem was that the image had never been pushed…

One step was missing: docker push reg.ont.io/taskon/gor:v1.0.0

kb describe pod taskon-demo-backend-7bf6c5f855-sd787

```
Events:
  Type     Reason     Age                From               Message
  ----     ------     ----               ----               -------
  Normal   Scheduled  29s                default-scheduler  Successfully assigned benchtaskon/taskon-demo-backend-7bf6c5f855-sd787 to 172.22.0.47
  Normal   Pulled     29s                kubelet            Container image "reg.ont.io/taskon/backend:v1.69.2.sleep" already present on machine
  Normal   Created    28s                kubelet            Created container taskon-demo-backend
  Normal   Started    28s                kubelet            Started container taskon-demo-backend
  Normal   Pulled     10s (x3 over 28s)  kubelet            Container image "reg.ont.io/taskon/gor:v1.0.0" already present on machine
  Normal   Created    10s (x3 over 28s)  kubelet            Created container goreplay
  Normal   Started    9s (x3 over 28s)   kubelet            Started container goreplay
  Warning  BackOff    9s (x3 over 26s)   kubelet            Back-off restarting failed container
```
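One common cause of `Back-off restarting failed container`, and plausibly the one here, is a command that exits right away. A command such as `sh -c "echo 123"` prints once and terminates, and with `restartPolicy: Always` the kubelet keeps restarting the container with a growing delay. The fragment below is only a sketch of one way to keep such a container alive while debugging; the `tail -f /dev/null` suffix is an assumption added for illustration and is not what this article ends up using (the final manifest runs `./start.sh` instead).

```yaml
# Fragment of spec.template.spec.containers; a debugging sketch only.
- name: goreplay
  image: reg.ont.io/taskon/gor:v1.0.0
  imagePullPolicy: IfNotPresent
  # Assumption: after the one-off command, block on a foreground
  # process so the container does not exit and get restarted.
  command: ["sh", "-c", "echo 123; tail -f /dev/null"]
```

For a real sidecar, the long-running process should be the tool itself, started in the foreground, rather than a placeholder like this.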

https://www.google.com/search?q=back-off+restarting+failed+container%E8%A7%A3%E5%86%B3

Defaulted container "taskon-demo-backend" out of: taskon-demo-backend, goreplay

https://stackoverflow.com/questions/74552547/defaulted-container-container-1-out-of-container-1-container-2

In other words, no container was named, so the command defaulted to taskon-demo-backend out of the pod's two containers, taskon-demo-backend and goreplay.

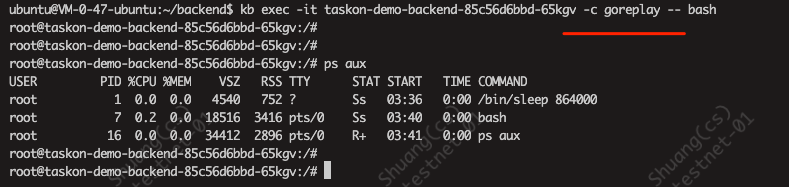

The container can be specified explicitly with xxx -c <container name>.

For example: kb exec -it taskon-demo-backend-85c56d6bbd-zzjkb -c goreplay -- bash
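As an alternative to passing `-c` every time, reasonably recent kubectl versions honor the `kubectl.kubernetes.io/default-container` annotation on the pod. The fragment below is a sketch that assumes goreplay should be the default target for commands such as exec and logs; whether that is the right default for this service is a judgment call.

```yaml
# Fragment of the Deployment's pod template; a sketch, not part of the
# original manifest.
spec:
  template:
    metadata:
      annotations:
        # Container that kubectl picks when -c is omitted.
        kubectl.kubernetes.io/default-container: goreplay
```

With this in place, the "Defaulted container" notice no longer appears for pods created from the template, and `-c` is only needed to reach the other container.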

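The fields had been written in the wrong place, so the ConfigMap ended up mounted into the goreplay container. (A lesson in YAML nesting and alignment…)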

The final working YAML:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  annotations:
    deployment.kubernetes.io/revision: "1"
  generation: 1
  labels:
    app: taskon-demo-backend
  name: taskon-demo-backend
  namespace: benchtaskon
spec:
  progressDeadlineSeconds: 600
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      app: taskon-demo-backend
  strategy:
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 25%
    type: RollingUpdate
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: taskon-demo-backend
    spec:
      imagePullSecrets:
        - name: regcred
      containers:
        # Main application container, with both volumes mounted.
        - image: reg.ont.io/taskon/backend:v1.69.2.sleep
          imagePullPolicy: IfNotPresent
          name: taskon-demo-backend
          resources: {}
          terminationMessagePath: /dev/termination-log
          terminationMessagePolicy: File
          volumeMounts:
            - name: uploaddata
              mountPath: /data
            - name: configjson-volume
              mountPath: /config
        # Sidecar container running gor.
        - image: reg.ont.io/taskon/gor:v1.0.0
          imagePullPolicy: IfNotPresent
          name: goreplay
          command:
            - ./start.sh
      dnsPolicy: ClusterFirst
      restartPolicy: Always
      schedulerName: default-scheduler
      securityContext: {}
      terminationGracePeriodSeconds: 30
      volumes:
        - name: configjson-volume
          configMap:
            name: taskon-demo-configjson
        - name: uploaddata
          persistentVolumeClaim:
            claimName: taskondemobackend
```

Original link: https://dashen.tech/2020/10/25/K8s创建包含多个容器的Pod/

Copyright notice: please credit the source when reposting.
